The Model Too Dangerous to Release

OpenAI withheld its own language model from the public, igniting a firestorm over AI transparency and safety.

Wikimedia Commons / Public domain

Due to concerns about large language models being used to generate deceptive, biased, or abusive language at scale, we are only releasing a much smaller version of GPT-2.

— OpenAI

The Model Too Dangerous to Release (2019)

In 2019, OpenAI made headlines by initially withholding the full version of GPT-2, a groundbreaking language model, over concerns about potential misuse.

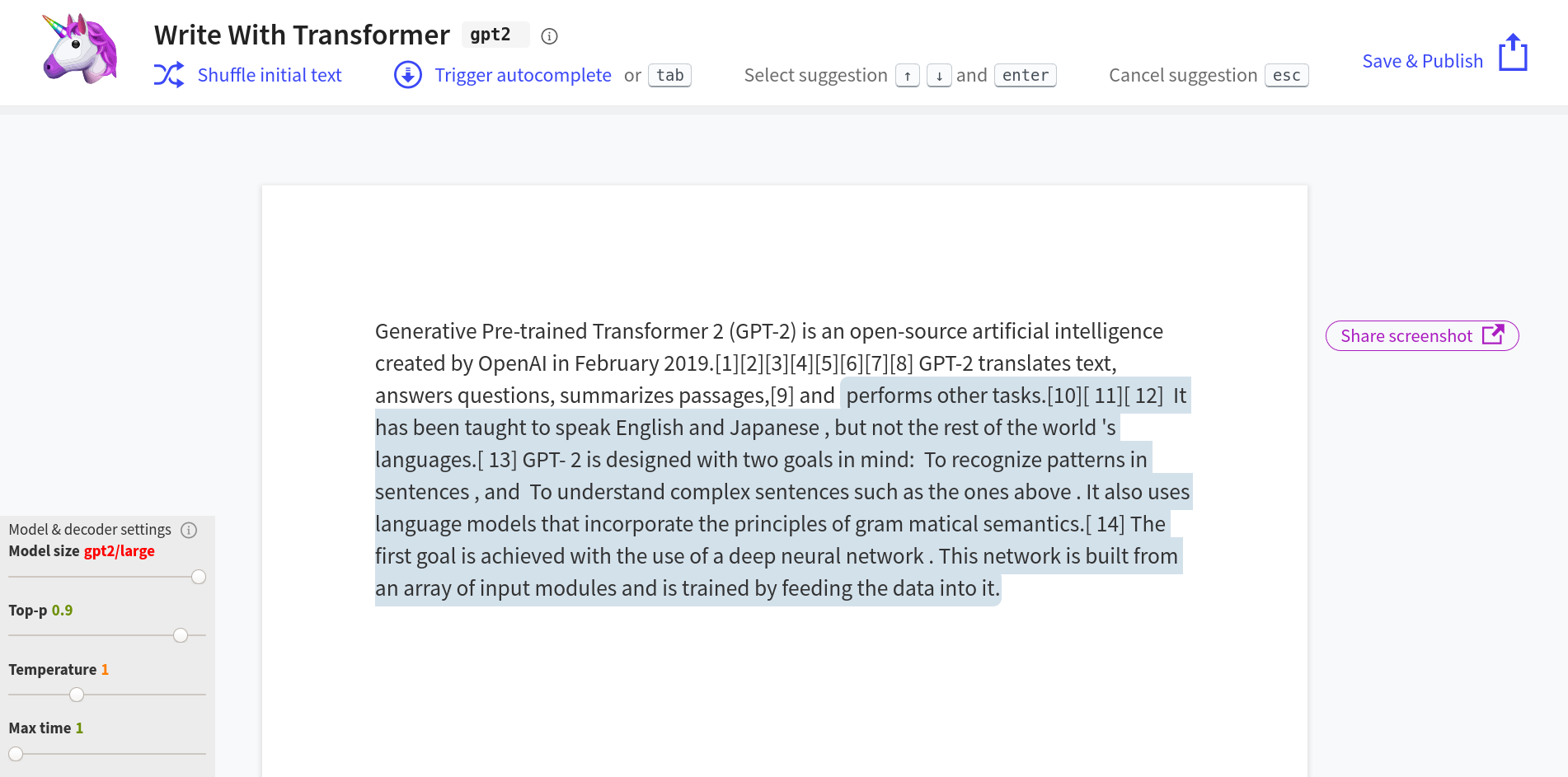

What happened: In February 2019, OpenAI introduced GPT-2, a large language model with 1.5 billion parameters, trained on 8 million web pages. The model showed impressive capabilities in generating human-like text, translating between languages, and answering questions. However, OpenAI initially released only a much smaller version of the model, citing fears that the full model could be misused for disinformation and spam. Key figures at OpenAI associated with the model and its release included Alec Radford, Jeff Wu, Ilya Sutskever, and Dario Amodei. The full model was eventually released on November 5, 2019 (announced in OpenAI's post "GPT-2: 1.5B Release"), but the initial staged release marked a significant moment in AI ethics.

Why it matters: This was among the first times a major AI lab declined to publish a fully trained model at launch, setting a precedent for ‘responsible disclosure’ in the AI community. It sparked debate over how to balance open research against the risk of misuse, and it shaped later discussions about releasing powerful AI models.

Why This Mattered

OpenAI's staged release of GPT-2 was among the first times a major AI lab declined to publish a fully trained model at launch, citing fears of misuse for disinformation and spam. The decision polarized the research community and helped establish 'responsible disclosure' as a lasting norm in AI, foreshadowing the debates over open versus closed models that followed.