Ilya Sutskever

Sequence-to-sequence learning and RNNs

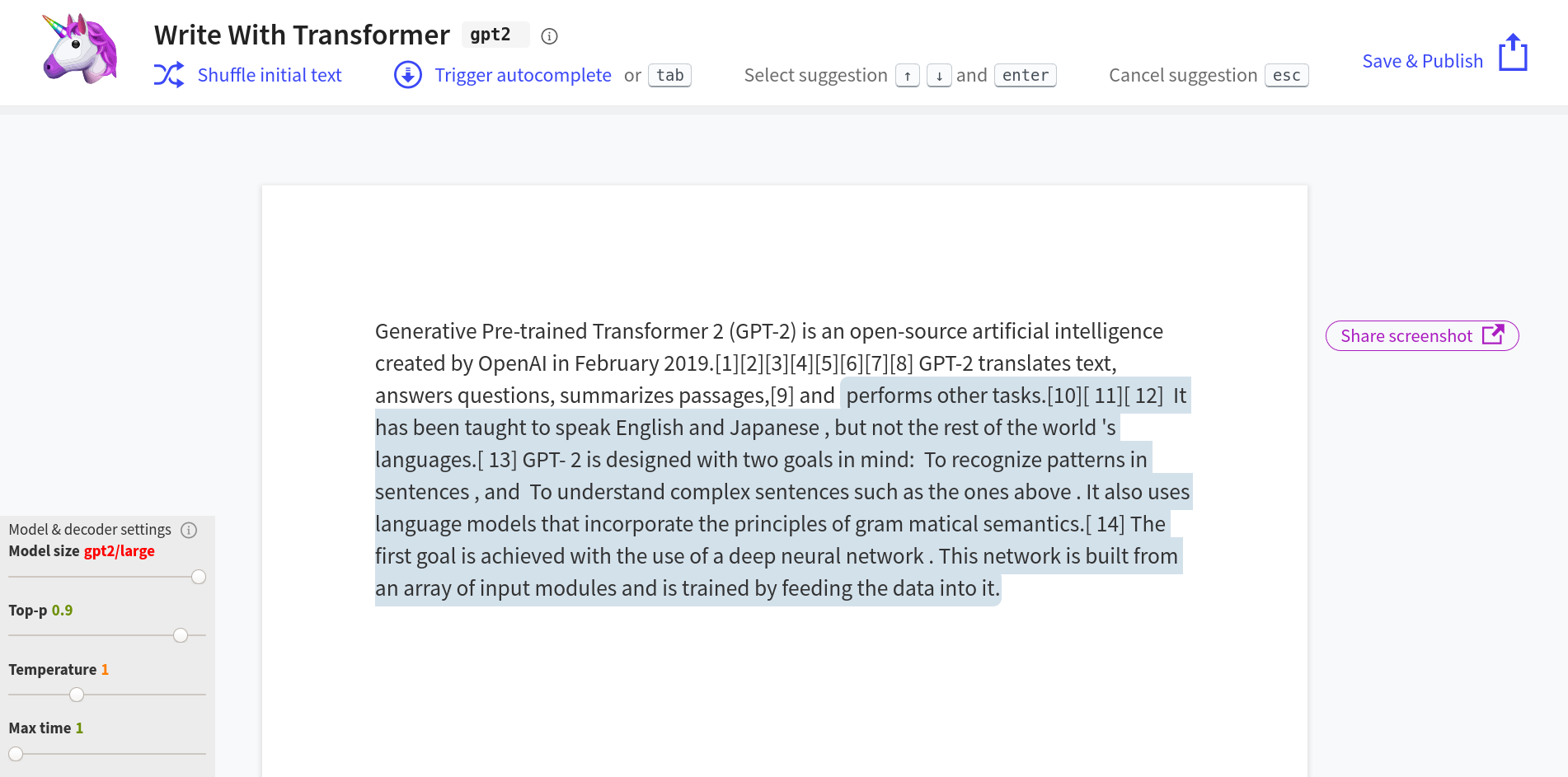

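- Co-authored the 2014 sequence-to-sequence (seq2seq) learning paper, which used recurrent neural networks to map one sequence to another and became a key advance in machine translation and natural language processing.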

- Contributed to methods for training recurrent neural networks (RNNs) for sequence prediction.

- Co-founded OpenAI and served as its research director and later chief scientist, playing a central role in its research.

Ilya Sutskever is an Israeli-Canadian computer scientist specializing in machine learning, particularly deep learning, a field to which he has made significant contributions.

Milestones

The Deep Learning Revolution (Research)

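In 2012, AlexNet, a deep convolutional neural network built by Alex Krizhevsky, Ilya Sutskever, and Geoffrey Hinton, won the ImageNet competition with a top-5 error rate of 15.3%, against 26.2% for the runner-up. The margin was so large that computer vision shifted rapidly toward deep learning.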

The Deep Learning Revolution (Policy)

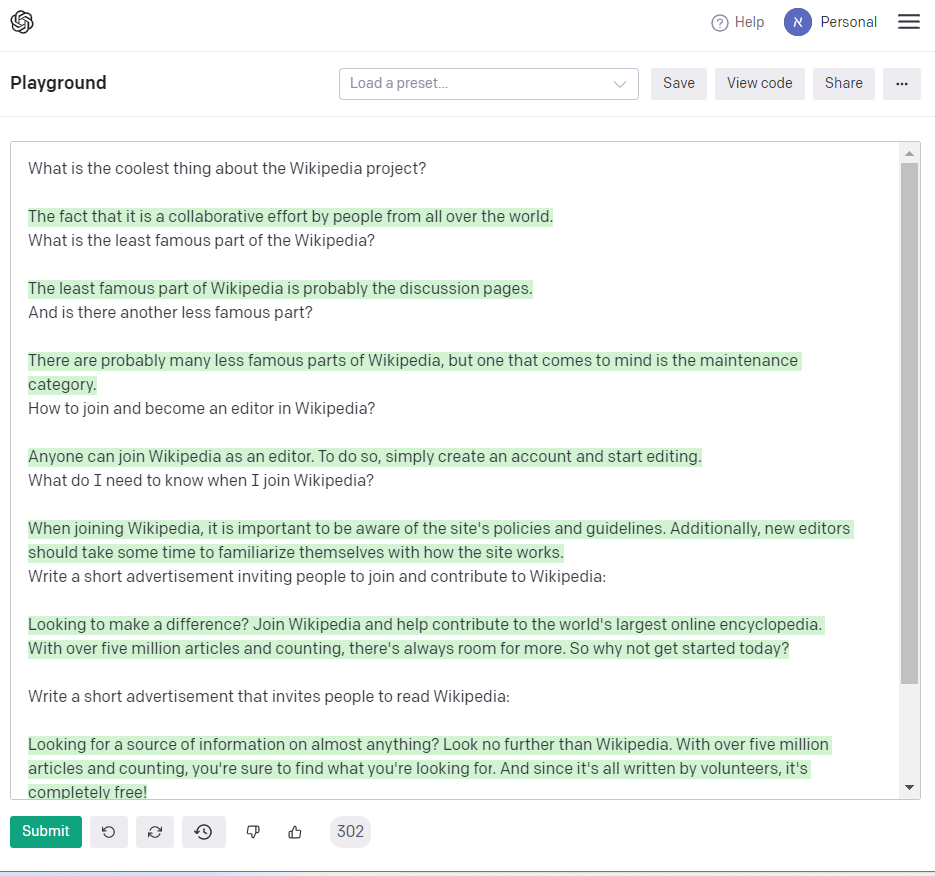

In 2019, OpenAI initially withheld the full version of its GPT-2 language model from the public, citing concerns about misuse, which sparked a heated debate over AI transparency and safety.

The Age of Foundation Models (Research)

In 2020, OpenAI's GPT-3 demonstrated that scaling up language models could produce abilities no one explicitly programmed, such as performing new tasks from a few examples given in the prompt, helping launch the foundation model era.

The Age of Foundation Models (Commercial)

OpenAI released ChatGPT on November 30, 2022. It was estimated to have reached 100 million users within about two months, which at the time made it the fastest-growing consumer application in history.