The Book That Killed Neural Networks

Minsky and Papert's rigorous mathematical critique of perceptrons convinced a generation of researchers to abandon neural networks, triggering a funding drought that lasted over a decade.

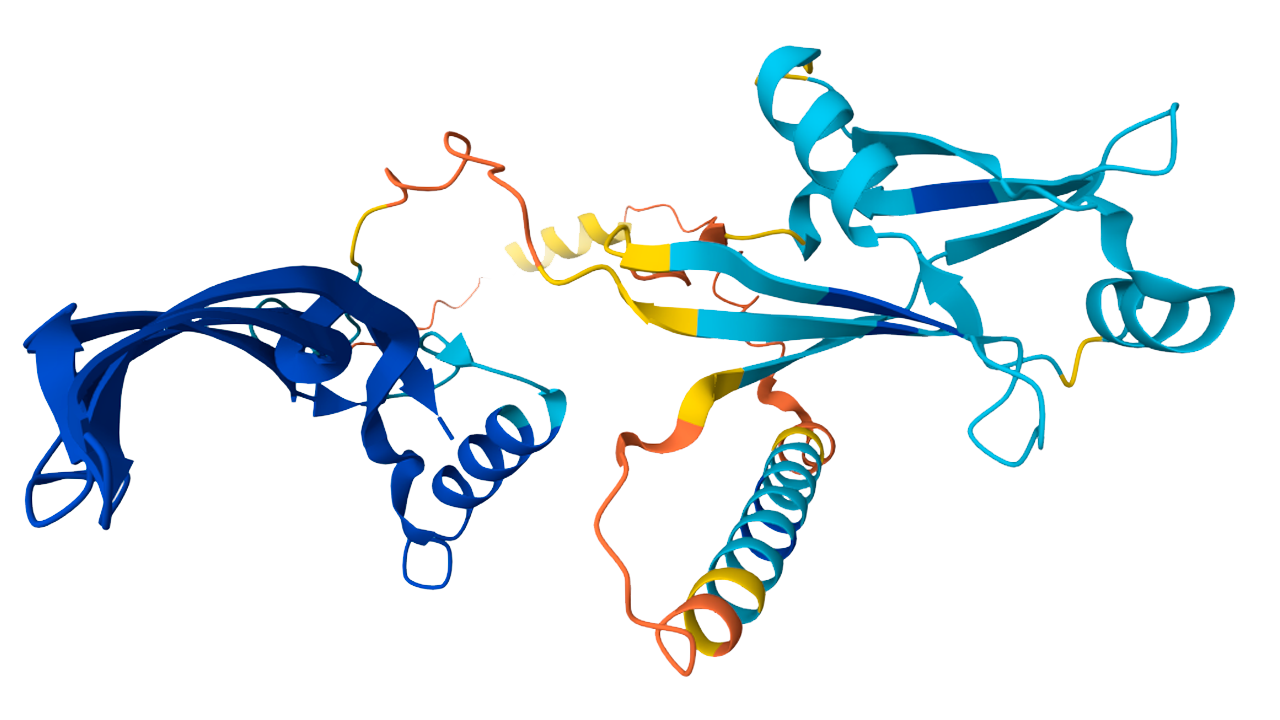

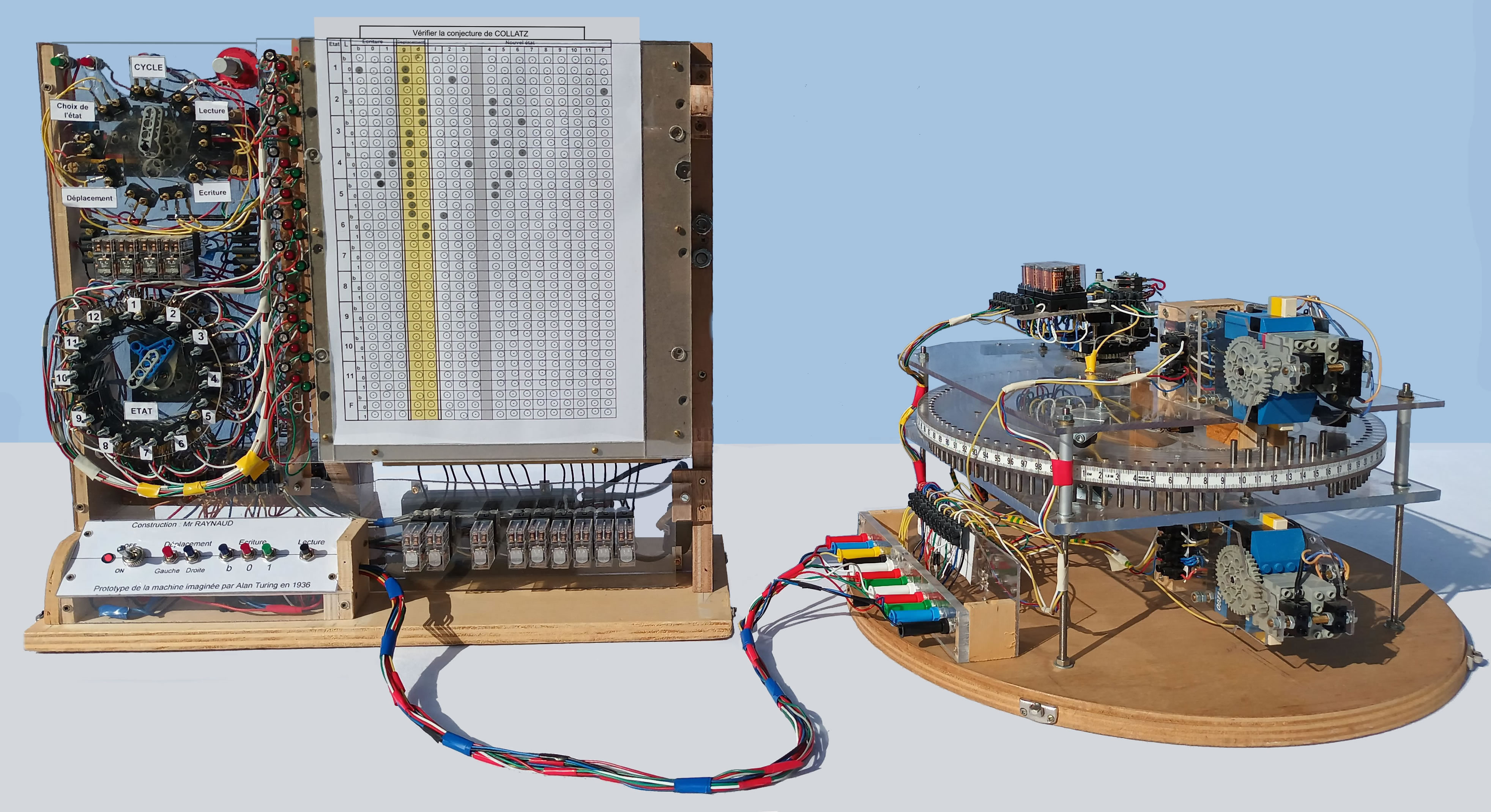

MartinThoma / CC BY 3.0

The question is whether [perceptrons] have enough power to justify serious investment of intellectual effort… the answer is essentially negative.

— Marvin Minsky and Seymour Papert, Perceptrons (1969)

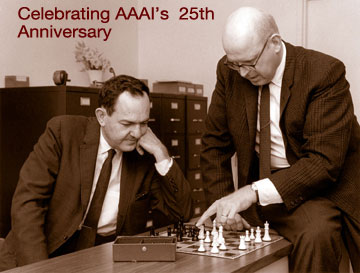

In 1969, Marvin Minsky and Seymour Papert published “Perceptrons,” a book that inadvertently set back neural network research for nearly two decades.

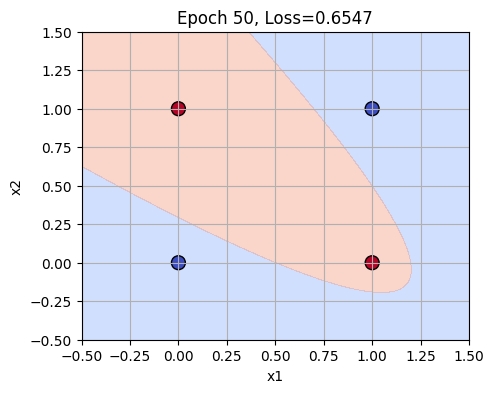

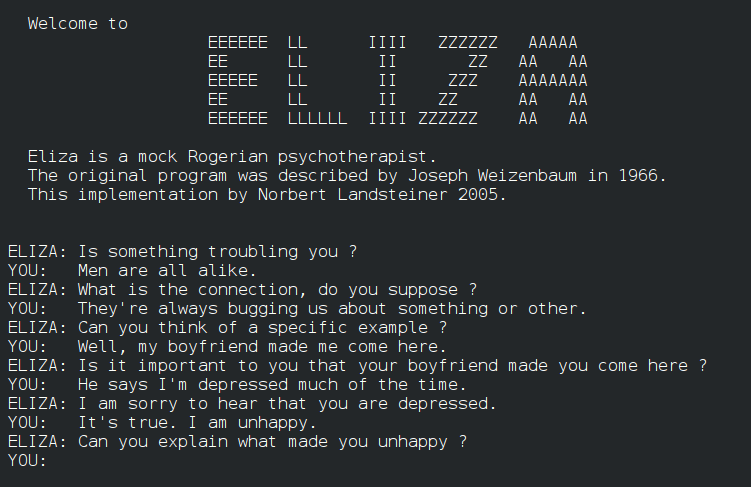

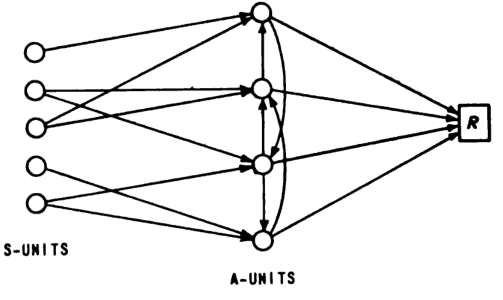

What happened: In 1969, Marvin Minsky and Seymour Papert published “Perceptrons: An Introduction to Computational Geometry,” a critical mathematical analysis of the limitations of single-layer neural networks, or perceptrons. They showed that these networks cannot compute certain simple functions, such as XOR (exclusive or), and expressed the intuitive judgment that multi-layer networks would prove similarly limited, a pessimism that later work on multi-layer networks showed to be unfounded. The book was dedicated to psychologist Frank Rosenblatt, who had developed the first model of a perceptron in 1957.
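To make the XOR limitation concrete, here is a minimal Python sketch of the classic perceptron learning rule applied to two Boolean functions. The names (`train_perceptron`, `AND_DATA`, `XOR_DATA`), the learning rate, and the epoch cap are illustrative assumptions, not details from the book. On AND, whose true and false cases a straight line can separate, the rule converges; on XOR it never finds weights that classify all four inputs, however many epochs it runs.

```python
# Sketch of perceptron learning on AND vs XOR.
# The data layout, learning rate, and epoch cap are illustrative assumptions.

AND_DATA = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
XOR_DATA = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]


def step(z):
    """Threshold activation: fire (1) when the weighted sum is positive."""
    return 1 if z > 0 else 0


def train_perceptron(data, lr=1.0, max_epochs=100):
    """Apply the perceptron learning rule.

    Returns (weights, bias, converged); converged is True only if some
    epoch classifies every example correctly.
    """
    w = [0.0, 0.0]
    b = 0.0
    for _ in range(max_epochs):
        errors = 0
        for (x1, x2), target in data:
            out = step(w[0] * x1 + w[1] * x2 + b)
            delta = target - out
            if delta != 0:
                errors += 1
                # Shift the decision boundary toward the misclassified point.
                w[0] += lr * delta * x1
                w[1] += lr * delta * x2
                b += lr * delta
        if errors == 0:
            return w, b, True
    return w, b, False


if __name__ == "__main__":
    for name, data in [("AND", AND_DATA), ("XOR", XOR_DATA)]:
        w, b, ok = train_perceptron(data)
        print(f"{name}: converged={ok}, weights={w}, bias={b}")
```

The failure on XOR is not a matter of tuning. A single threshold unit outputs 1 when w1·x1 + w2·x2 + b > 0. XOR would require b ≤ 0 (input 0,0), w1 + b > 0 and w2 + b > 0 (inputs 1,0 and 0,1), and w1 + w2 + b ≤ 0 (input 1,1). Adding the middle two inequalities gives w1 + w2 + 2b > 0, so w1 + w2 + b > -b ≥ 0, which contradicts the last condition.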

Why it matters: “Perceptrons” helped redirect AI funding away from neural networks and toward symbolic methods, contributing to the period now known as the first “AI winter.” It wasn’t until the backpropagation revival of the 1980s that the limitations Minsky and Papert had identified were overcome, when multi-layer networks trained with backpropagation were shown to learn functions, such as XOR, that no single-layer perceptron can represent. The book shaped the direction of AI research for nearly two decades.
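The limitation disappears once a hidden layer is allowed. The sketch below, in Python with hypothetical names (`unit`, `xor_net`), wires three threshold units by hand: one hidden unit computes OR, another computes NAND, and the output unit takes the AND of the two, which is exactly XOR. The weights are hand-picked for illustration; backpropagation's contribution was a way to learn such weights from data, which in practice requires smooth activations such as the sigmoid in place of these hard thresholds.

```python
# Hand-wired two-layer threshold network for XOR.
# Weights are chosen by hand for illustration, not learned.


def step(z):
    """Threshold activation: fire (1) when the weighted sum is positive."""
    return 1 if z > 0 else 0


def unit(weights, bias, inputs):
    """A single perceptron-style unit: thresholded weighted sum."""
    return step(sum(w * x for w, x in zip(weights, inputs)) + bias)


def xor_net(x1, x2):
    # Hidden layer: h1 computes OR, h2 computes NAND.
    h1 = unit((1, 1), -0.5, (x1, x2))
    h2 = unit((-1, -1), 1.5, (x1, x2))
    # Output layer: AND of the two hidden units yields XOR.
    return unit((1, 1), -1.5, (h1, h2))


if __name__ == "__main__":
    for x1 in (0, 1):
        for x2 in (0, 1):
            print(x1, x2, "->", xor_net(x1, x2))
```

Running it prints `0 0 -> 0`, `0 1 -> 1`, `1 0 -> 1`, and `1 1 -> 0`, one per line.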


Why This Mattered

Perceptrons demonstrated that single-layer networks could not solve simple problems like XOR, and implied — incorrectly, many later argued — that multi-layer networks were equally hopeless. The book redirected AI funding away from neural networks and toward symbolic methods for nearly two decades, until the backpropagation revival of the 1980s proved that deeper architectures could overcome the limitations Minsky and Papert had identified.