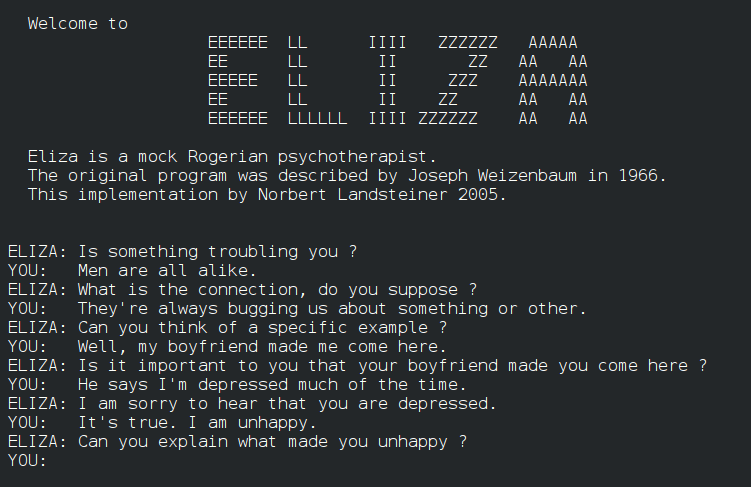

The Program That Understood a Tiny World Perfectly

Terry Winograd's SHRDLU could hold a conversation about colored blocks — and fooled everyone into thinking language was solved.

In some ways the program was too successful. It gave the impression that the problems of language understanding had been solved, when in fact they had barely been touched.

— Terry Winograd

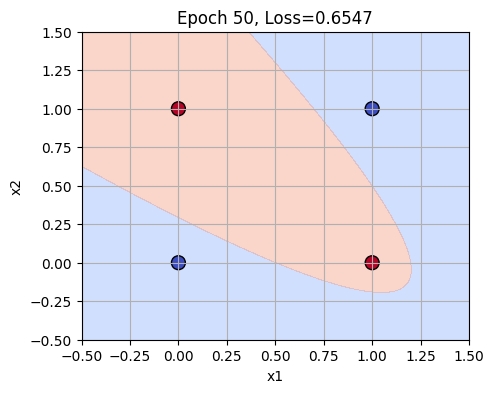

In 1970, MIT researcher Terry Winograd unveiled SHRDLU, a groundbreaking natural language processing program that could understand and respond to human commands in a simulated blocks world. SHRDLU demonstrated that a computer could carry on a fluent English dialogue within that world, picking up and moving blocks based on user instructions. While its success was impressive, SHRDLU’s confinement to a toy domain foreshadowed the challenges of scaling natural language understanding to real-world applications.

The demonstration was pivotal in showing that AI could process human language, but it also exposed how hard it would be to build systems that can understand and interact with the complexity of the real world. This lesson has influenced the direction of AI research ever since.

Why This Mattered

SHRDLU demonstrated fluent English dialogue within a simulated blocks world, letting users type commands like 'pick up the big red block' and ask follow-up questions. Its stunning demos convinced many that natural language understanding was nearly solved — but its success hid the fact that the approach could never scale beyond a toy domain, a lesson that would haunt AI for decades.