The Doctor Program That Outperformed Doctors

A Stanford AI diagnosed blood infections more accurately than most physicians — then was quietly shelved because no one knew who to blame if it was wrong.

The question is not whether a computer can be made to perform a task as well as a physician, but whether physicians and patients will accept its doing so.

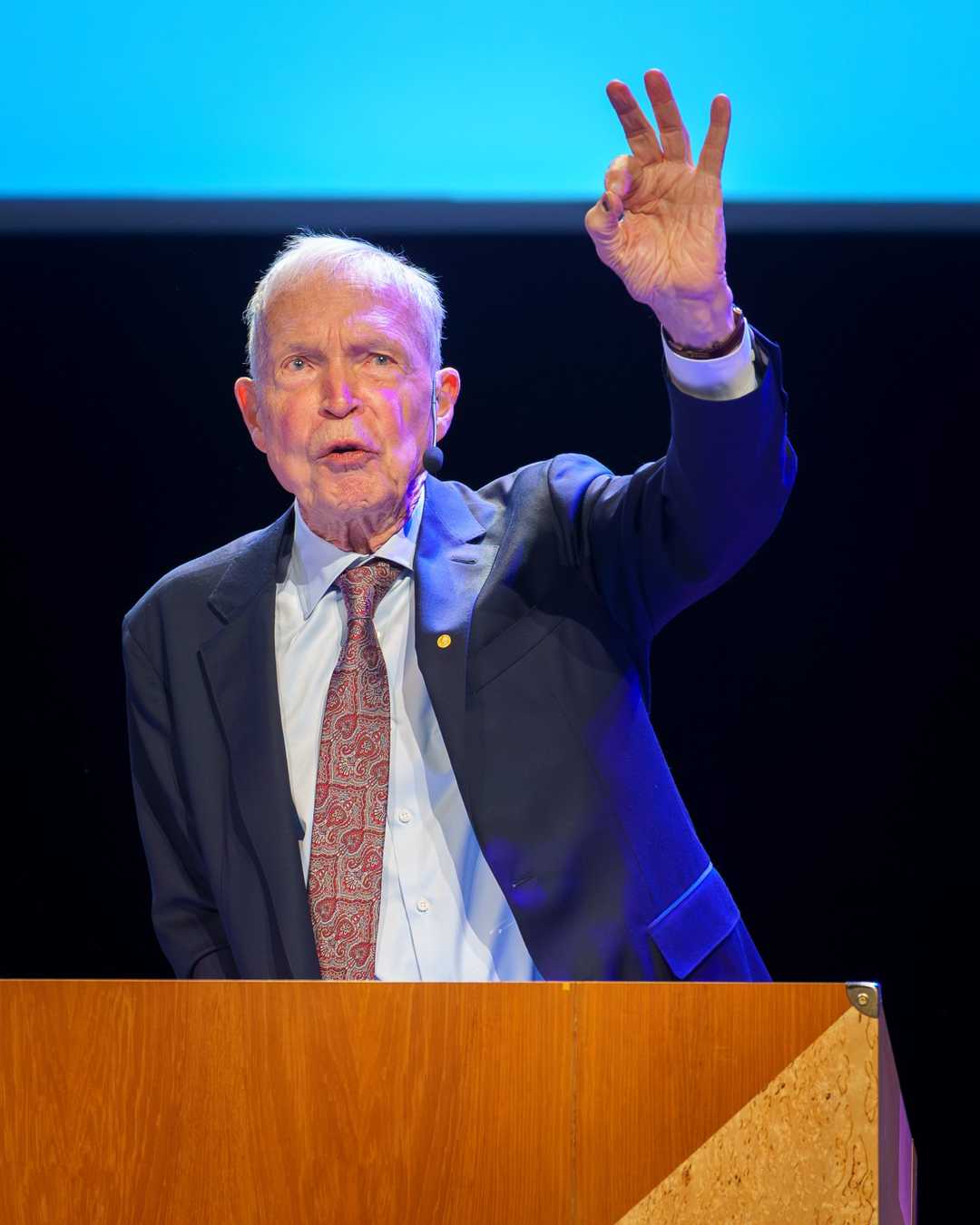

— Edward Shortliffe

In 1976, MYCIN, an early expert system developed at Stanford University, demonstrated the potential of artificial intelligence in medical diagnostics by outperforming many physicians in diagnosing bacterial infections and recommending antibiotic treatments.

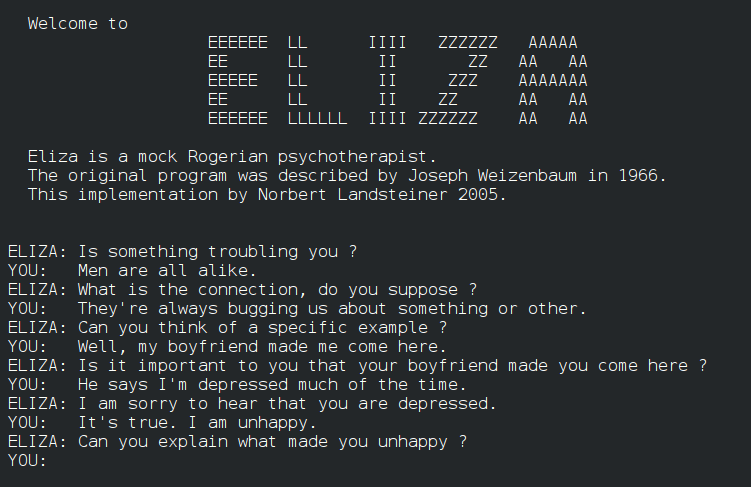

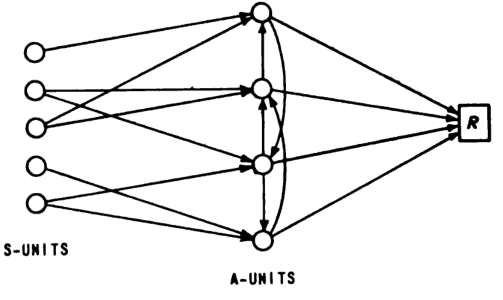

What happened: In 1976, Edward Shortliffe, under the direction of Bruce Buchanan and Stanley Cohen at Stanford University, completed his doctoral dissertation on MYCIN, an expert system designed to diagnose bacterial infections and recommend antibiotic treatments. MYCIN reasoned by backward chaining over a knowledge base of if-then medical rules. It started from a goal, such as identifying the infecting organism, and worked backward through the rules to the patient data needed to support it. In blind evaluations, its treatment recommendations were judged acceptable in about 69% of cases, a better record than many practicing physicians achieved. Despite this success, MYCIN was never deployed clinically because of concerns over liability and trust in AI-assisted medicine.
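The goal-driven reasoning described above can be illustrated with a small backward-chaining sketch. It is a hypothetical toy, not MYCIN's code or knowledge base (MYCIN itself was written in Lisp). The `Rule` class, the `prove` function and the bacteriology rules are invented for illustration. The sketch also uses plain true/false facts, whereas MYCIN attached certainty factors to its conclusions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    """IF every premise holds THEN the conclusion holds."""
    premises: tuple[str, ...]
    conclusion: str


# Hypothetical toy rules for illustration only; these are NOT MYCIN's actual rules.
RULES = [
    Rule(("gram_positive", "coccus", "grows_in_chains"), "organism_is_streptococcus"),
    Rule(("gram_negative", "rod_shaped", "anaerobic"), "organism_is_bacteroides"),
    Rule(("organism_is_streptococcus",), "recommend_penicillin"),
]


def prove(goal, rules, observations, trace=None, path=frozenset()):
    """Backward chaining: try to establish `goal` from rules whose conclusion matches it.

    `observations` stands in for the questions a consultation system would put
    to the physician: a dict mapping an observable fact to True or False.
    `trace` (optional list) collects every rule that fired during the search.
    """
    if goal in path:  # stop circular chains such as A -> B -> A
        return False
    path = path | {goal}
    for rule in rules:
        # A rule applies only if its conclusion is the current goal and
        # every premise can itself be proved (recursively, as a subgoal).
        if rule.conclusion == goal and all(
            prove(p, rules, observations, trace, path) for p in rule.premises
        ):
            if trace is not None:
                trace.append(rule)
            return True
    # No rule established the goal, so fall back to the observed patient data.
    return observations.get(goal, False)


if __name__ == "__main__":
    patient = {"gram_positive": True, "coccus": True, "grows_in_chains": True}
    fired = []
    print(prove("recommend_penicillin", RULES, patient, fired))  # True
    print([r.conclusion for r in fired])  # ['organism_is_streptococcus', 'recommend_penicillin']
    print(prove("organism_is_bacteroides", RULES, patient))  # False
```

Because the search starts from the goal, the program only looks up the findings that some relevant rule actually needs. This is what lets a backward-chaining consultation keep its questions focused, rather than collecting every possible observation up front.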

Why it matters: MYCIN was a landmark for artificial intelligence, and for expert systems in particular. It showed that a rule-based program could match or even exceed human performance in a narrow medical domain, setting the stage for later work on AI-assisted diagnostics. It also exposed the obstacles to bringing AI into clinical practice, including liability and trust, which remain relevant today.

Why This Mattered

MYCIN demonstrated that rule-based expert systems could match or exceed human expert performance in narrow medical domains. In blind evaluations, its diagnoses and antibiotic recommendations were judged acceptable in roughly 69% of cases, outperforming many practicing physicians. Yet it was never deployed clinically. It became an iconic case study in the gap between AI capability and real-world adoption, and it foreshadowed debates about liability, trust, and accountability in AI-assisted medicine that persist today.