The Physicist Who Gave Networks Memory

John Hopfield showed that a neural network could store and retrieve patterns like a physical system reaching equilibrium, reviving connectionism from its decade-long exile.

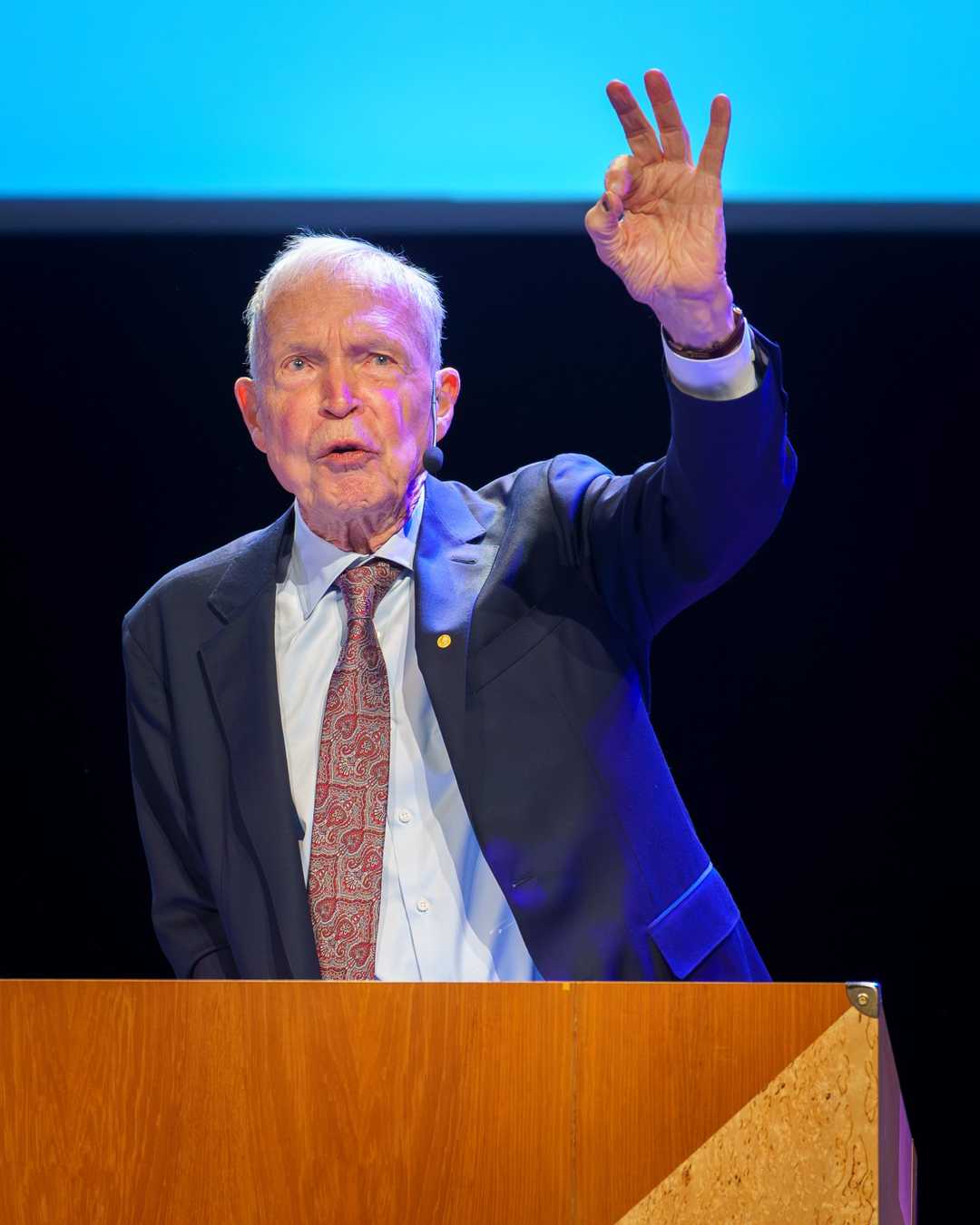

Jay Dixit / CC BY-SA 4.0

I was a physicist who wandered into biology, and then wandered from biology into computation. Each time I wandered, I found problems that were interesting.

— John Hopfield

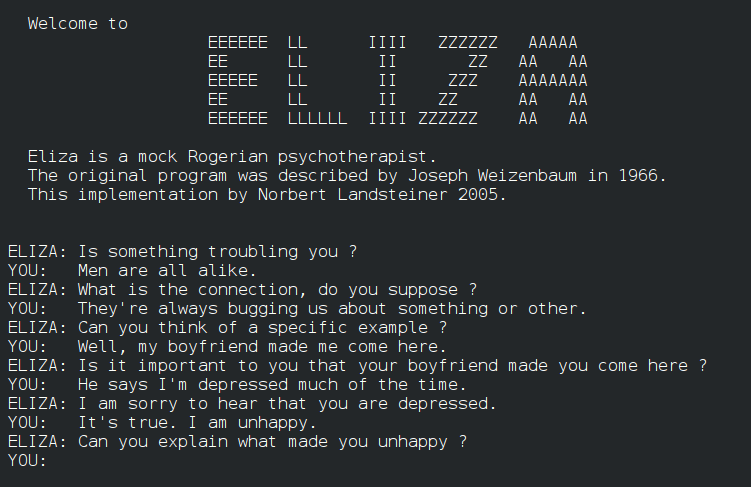

In 1982, physicist John Hopfield introduced the Hopfield network, a recurrent neural network that works as an associative memory: given a fragment of a stored pattern, it recalls the whole.

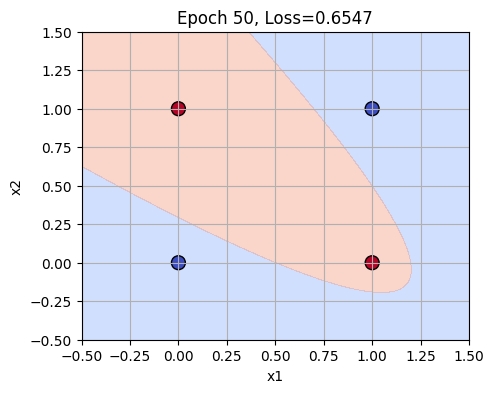

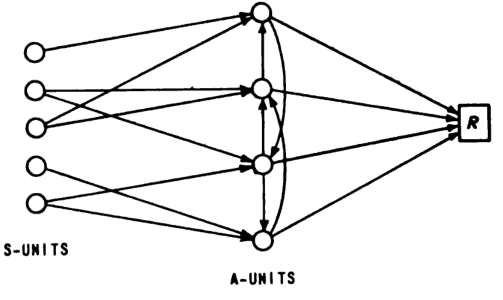

What happened: In 1982, John Hopfield published a paper introducing the Hopfield network, a neural network model that can store patterns and recall them from partial or noisy inputs. The network consists of a single layer of neurons in which every neuron is connected to every other. Its behavior is described by an energy function: stored patterns sit at local minima of that energy, so the network settles from an incomplete input toward a nearby stored pattern. (Source: Hopfield network, Wikipedia)
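The store-and-recall behavior described above can be sketched in a few lines of Python. This is a minimal illustration, not Hopfield's original formulation: it assumes units take values +1 or -1, thresholds are zero, weights are set with a simple Hebbian rule, and units are updated one at a time in random order. The function names (`train_hebbian`, `energy`, `recall`) and the two example patterns are hypothetical, chosen only for this sketch.

```python
import random


def train_hebbian(patterns):
    """Build a symmetric weight matrix from +1/-1 patterns (Hebbian rule)."""
    n = len(patterns[0])
    weights = [[0.0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:  # no self-connections
                    weights[i][j] += p[i] * p[j] / n
    return weights


def energy(weights, state):
    """E = -1/2 * sum_ij w_ij * s_i * s_j (thresholds assumed to be zero)."""
    n = len(state)
    return -0.5 * sum(
        weights[i][j] * state[i] * state[j] for i in range(n) for j in range(n)
    )


def recall(weights, state, max_sweeps=10, seed=0):
    """Update units one at a time until a full sweep changes nothing."""
    rng = random.Random(seed)
    state = list(state)
    n = len(state)
    for _ in range(max_sweeps):
        changed = False
        order = list(range(n))
        rng.shuffle(order)
        for i in order:
            # Weighted input from all other units decides the new value.
            field = sum(weights[i][j] * state[j] for j in range(n))
            new_value = 1 if field >= 0 else -1
            if new_value != state[i]:
                state[i] = new_value
                changed = True
        if not changed:
            break
    return state


# Two example patterns (hypothetical data for this sketch).
stored = [
    [1, 1, 1, 1, -1, -1, -1, -1],
    [1, -1, 1, -1, 1, -1, 1, -1],
]
weights = train_hebbian(stored)

# Corrupt the first pattern by flipping one unit, then let the network settle.
noisy = list(stored[0])
noisy[0] = -noisy[0]
recovered = recall(weights, noisy)

print(recovered == stored[0])  # True
print(energy(weights, noisy), energy(weights, recovered))  # -1.5 -3.0
```

The corrupted input has higher energy than the stored pattern, and the updates move the state downhill until it reaches the stored pattern. With more patterns, or patterns that overlap heavily, a network this small can settle into the wrong minimum, so the example is deliberately kept to two non-overlapping patterns.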

Why it matters: The Hopfield network showed how principles from statistical physics could be applied to neural networks. It led to the development of Boltzmann machines and contributed to the resurgence of connectionist approaches in AI, laying foundational ideas for modern deep learning. (Source: John Hopfield, Wikipedia)

The Hopfield network’s ability to retrieve complete patterns from partial information has had a lasting impact on the field of artificial intelligence.

Why This Mattered

Hopfield networks demonstrated that ideas from statistical physics could explain how networks of simple units store memories as energy minima. The paper brought physicists flooding into neural network research and directly inspired Geoffrey Hinton and others to develop Boltzmann machines, helping reignite the connectionist movement that led to modern deep learning.
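The phrase "memories as energy minima" can be made concrete with the energy function usually written for this model. The notation here is an assumption for illustration rather than something taken from this article: unit states $s_i \in \{-1, +1\}$, symmetric weights $w_{ij} = w_{ji}$ with no self-connections, and thresholds $\theta_i$.

$$
E = -\frac{1}{2} \sum_{i \neq j} w_{ij}\, s_i s_j + \sum_i \theta_i s_i
$$

If a single unit is updated to $s_i' = \operatorname{sgn}\!\big(\sum_{j} w_{ij} s_j - \theta_i\big)$, the energy changes by

$$
\Delta E = -\,(s_i' - s_i)\Big(\sum_{j} w_{ij} s_j - \theta_i\Big) \le 0,
$$

because $s_i' - s_i$ is either zero or has the same sign as the bracketed term. Each update can therefore only lower the energy or leave it unchanged, which is why the network settles into a minimum in the way a physical system relaxes toward equilibrium.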