Learning in Reverse: The Backpropagation Breakthrough

A 1986 Nature paper showed neural networks how to learn by propagating errors backward, reviving a field that had been declared dead.

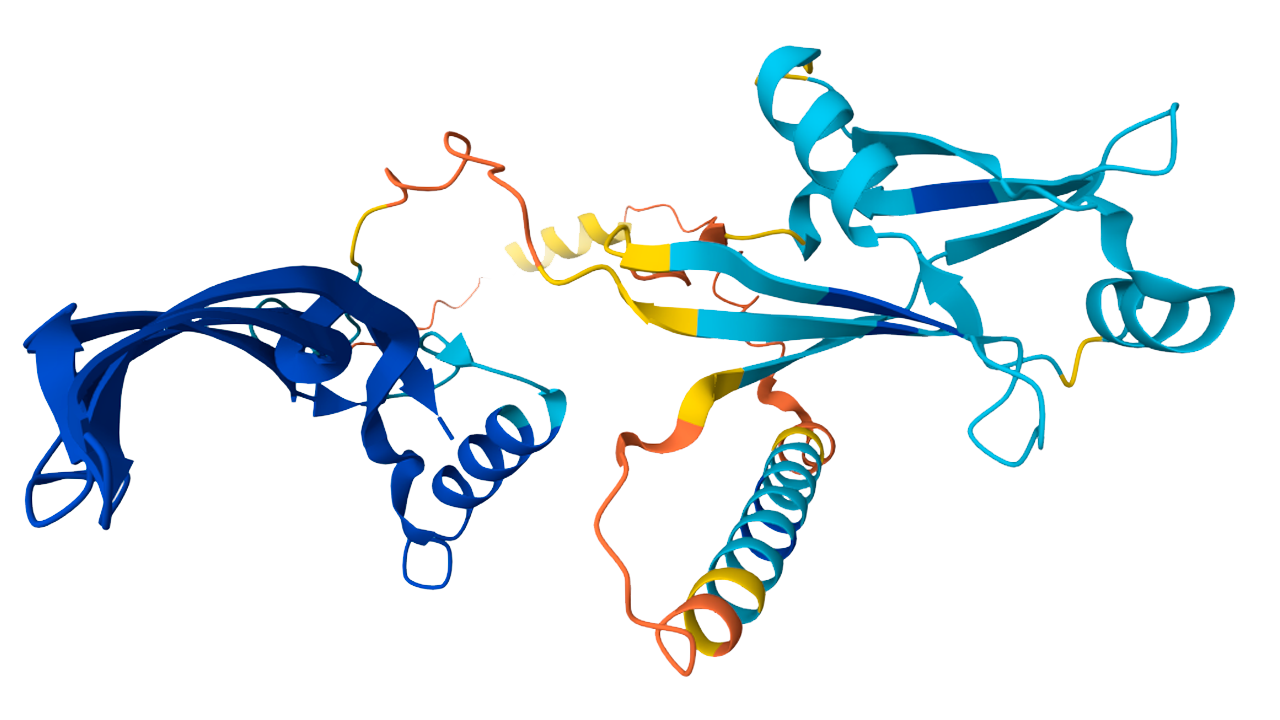

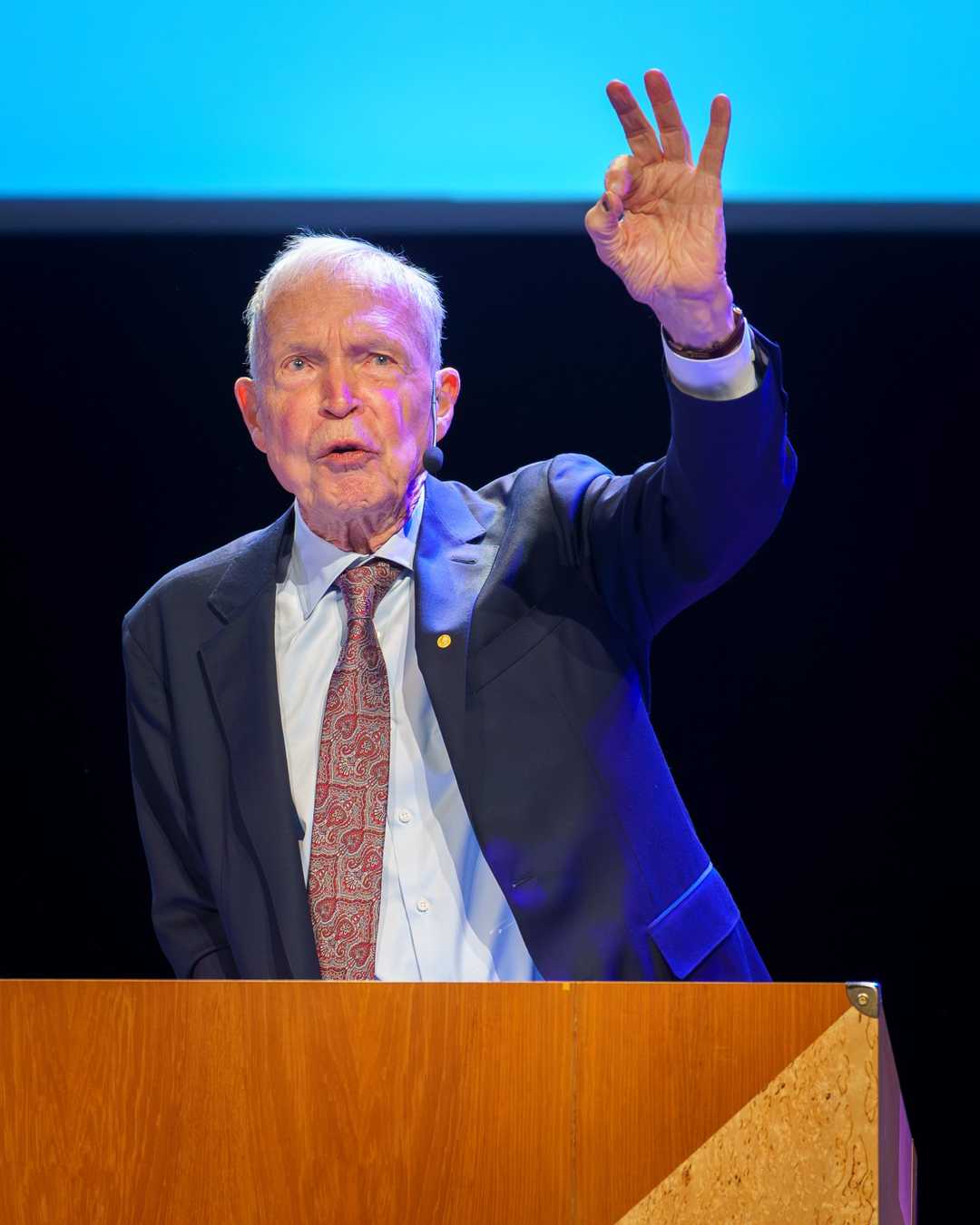

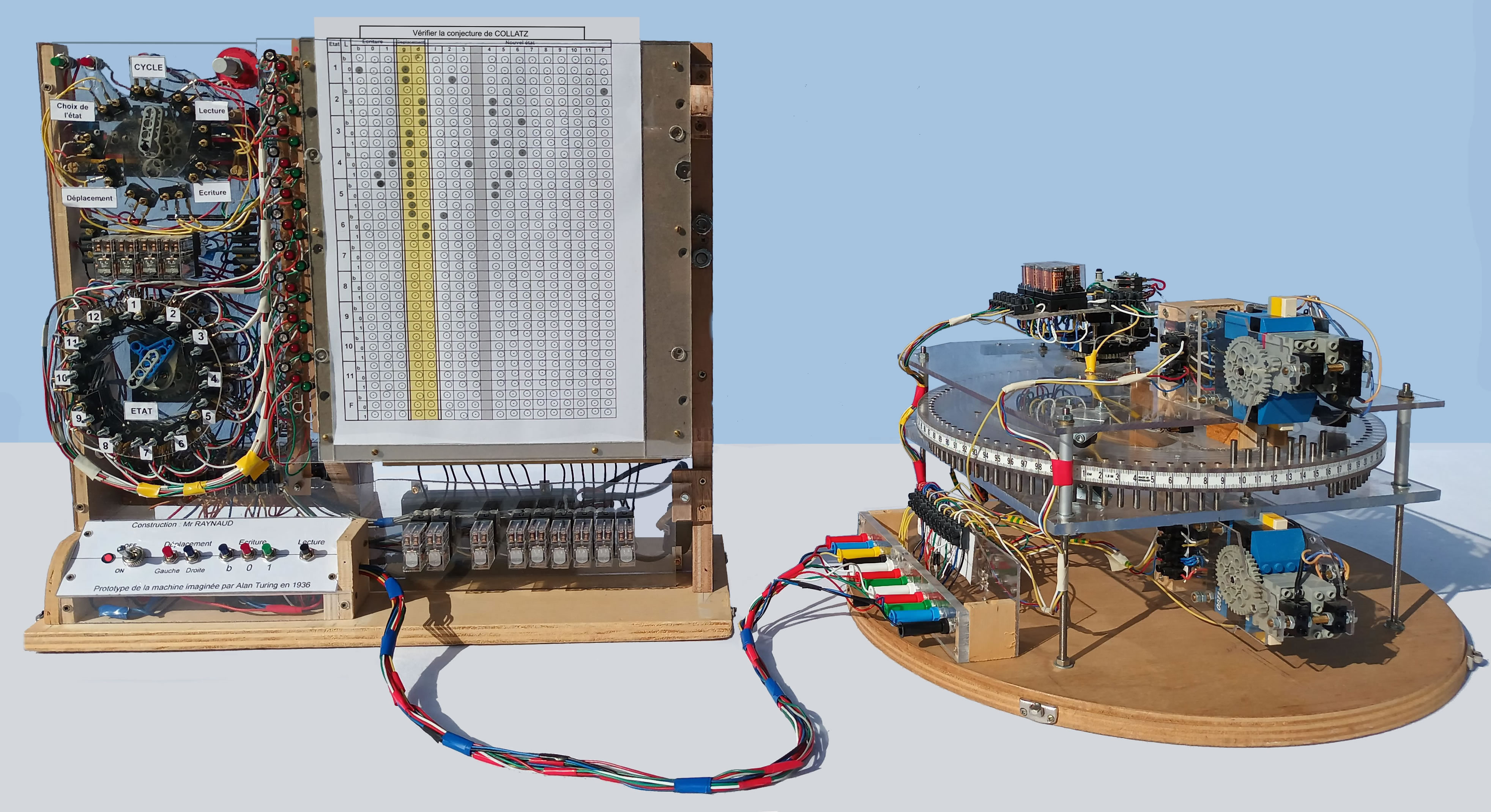

Tomasz59 / CC BY-SA 4.0

We describe a new learning procedure, back-propagation, for networks of neurone-like units. The procedure repeatedly adjusts the weights of the connections in the network so as to minimize a measure of the difference between the actual output vector of the net and the desired output vector.

— David Rumelhart, Geoffrey Hinton, Ronald Williams


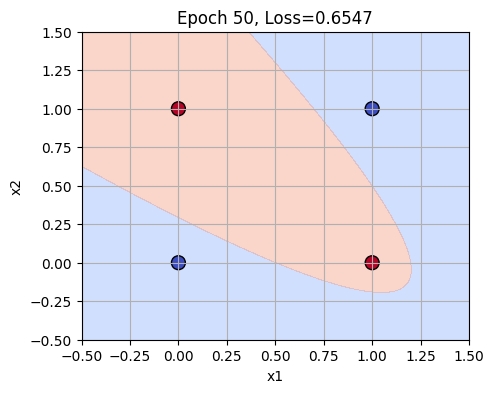

Backpropagation, brought to wide attention in 1986, made it practical to train multi-layer neural networks.

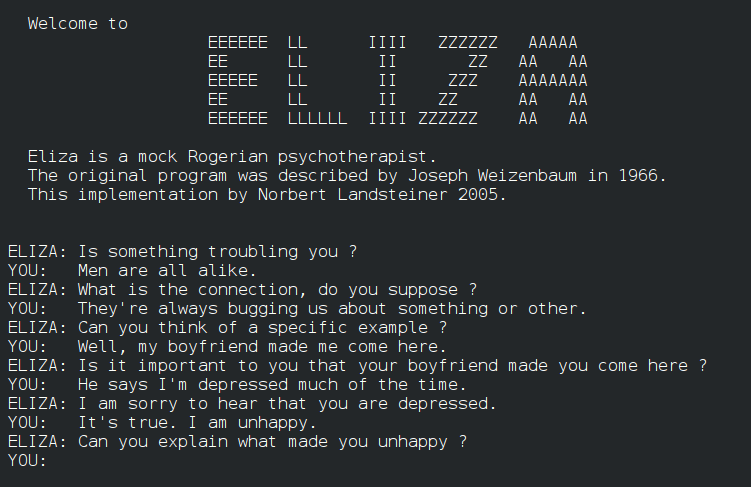

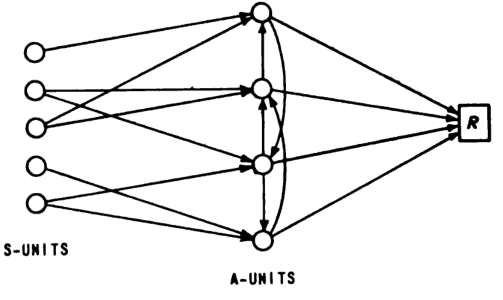

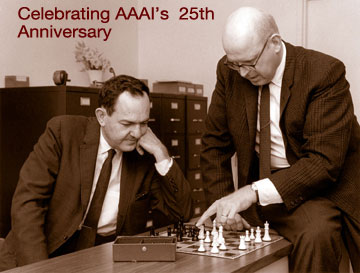

What happened: In 1986, David Rumelhart, Geoffrey Hinton, and Ronald Williams published a paper in Nature describing backpropagation, an algorithm that efficiently computes the gradient of a loss function with respect to every weight in a neural network by applying the chain rule backward from the output layer. This gave hidden units a usable error signal, a practical answer to the credit-assignment problem that had stalled the field since Minsky and Papert's 1969 critique.

Why it matters: Backpropagation became the workhorse algorithm behind virtually every deep learning system that followed, from speech recognition to large language models.
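To make the gradient computation described above concrete, here is a minimal sketch in plain Python. It is not the code or notation from the 1986 paper. The network shape (two inputs, two sigmoid hidden units, one sigmoid output), the squared-error loss, and all function names are assumptions chosen for illustration. The sketch computes gradients by passing the output error backward through the weights, then compares one of them against a finite-difference estimate.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def forward(params, x):
    """Forward pass: returns hidden activations and the scalar output."""
    w1, b1, w2, b2 = params
    h = [sigmoid(sum(w * xi for w, xi in zip(row, x)) + b)
         for row, b in zip(w1, b1)]
    y = sigmoid(sum(w * hi for w, hi in zip(w2, h)) + b2)
    return h, y


def loss(params, x, target):
    """Squared error between the network output and the target."""
    _, y = forward(params, x)
    return 0.5 * (y - target) ** 2


def backprop(params, x, target):
    """Gradients of the loss with respect to every weight and bias."""
    w1, b1, w2, b2 = params
    h, y = forward(params, x)
    # Error signal at the output unit: dL/dz = (y - t) * y * (1 - y).
    delta_out = (y - target) * y * (1.0 - y)
    grad_w2 = [delta_out * hi for hi in h]
    grad_b2 = delta_out
    # Propagate the error backward through w2 to each hidden unit.
    delta_hidden = [delta_out * w2[j] * h[j] * (1.0 - h[j])
                    for j in range(len(h))]
    grad_w1 = [[d * xi for xi in x] for d in delta_hidden]
    grad_b1 = list(delta_hidden)
    return grad_w1, grad_b1, grad_w2, grad_b2


def numeric_grad_w2(params, x, target, j, eps=1e-6):
    """Central-difference estimate of dL/dw2[j], used only as a check."""
    w1, b1, w2, b2 = params
    up, down = list(w2), list(w2)
    up[j] += eps
    down[j] -= eps
    return (loss((w1, b1, up, b2), x, target)
            - loss((w1, b1, down, b2), x, target)) / (2 * eps)


# Hypothetical starting weights and a single made-up training example.
random.seed(0)
params = (
    [[random.uniform(-1, 1) for _ in range(2)] for _ in range(2)],  # w1
    [0.0, 0.0],                                                     # b1
    [random.uniform(-1, 1) for _ in range(2)],                      # w2
    0.0,                                                            # b2
)
x, target = [1.0, 0.0], 1.0

grads = backprop(params, x, target)
print("backprop dL/dw2[0]:", grads[2][0])
print("numeric  dL/dw2[0]:", numeric_grad_w2(params, x, target, 0))
```

The two printed values should agree to several decimal places. The efficiency comes from reuse: one backward pass yields every gradient at once, whereas the finite-difference check needs two extra forward passes per weight.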

