The Network That Learned the Order of Things

Jeffrey Elman's simple recurrent network showed that neural networks could process sequences and learn grammar, opening the door to modern language AI.

The network is not given any explicit information about the structure of the sentences. It must discover this structure on its own.

— Jeffrey Elman

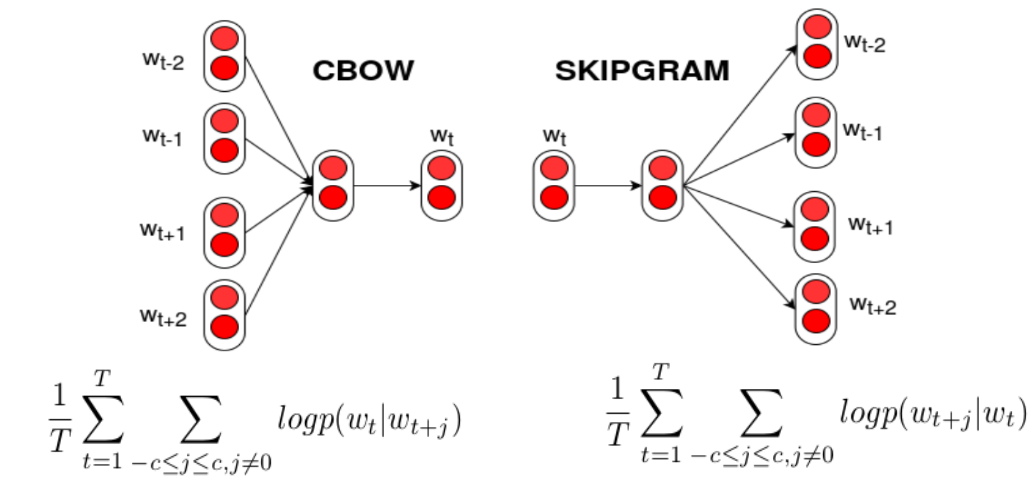

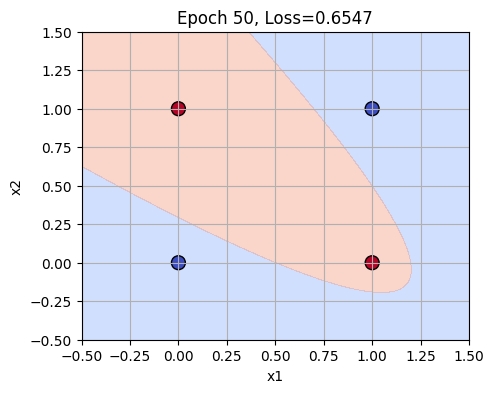

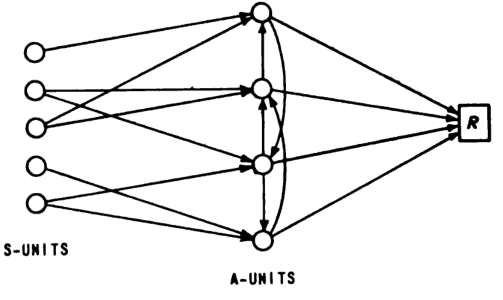

In 1990, cognitive scientist Jeffrey Elman introduced the simple recurrent network (also called the Elman network), a model that could learn temporal patterns and basic grammatical structure from raw sequences.

What happened: In his 1990 paper 'Finding Structure in Time', Elman presented the simple recurrent network, a recurrent neural network that feeds a copy of its previous hidden state back in alongside each new input, so that its response to an element depends on what came before it. This work laid a foundation for sequence modeling and influenced the development of later recurrent networks used in language processing.
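The recurrence at the heart of a simple recurrent network can be sketched in a few lines of plain Python. This is a minimal illustration rather than Elman's original setup: the class name, weight names, layer sizes, random initialization, and zero starting context are all assumptions made for the example, and biases and training are left out.

```python
import math
import random


def sigmoid(z):
    """Logistic activation, squashing any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector (list of floats)."""
    return [sum(w * v for w, v in zip(row, vector)) for row in matrix]


class SimpleRecurrentNetwork:
    """Forward pass only; weights are random and untrained (illustrative)."""

    def __init__(self, n_in, n_hidden, n_out, seed=0):
        rng = random.Random(seed)

        def init(rows, cols):
            return [[rng.uniform(-0.5, 0.5) for _ in range(cols)]
                    for _ in range(rows)]

        self.n_hidden = n_hidden
        self.w_in = init(n_hidden, n_in)           # input   -> hidden
        self.w_context = init(n_hidden, n_hidden)  # context -> hidden
        self.w_out = init(n_out, n_hidden)         # hidden  -> output

    def forward(self, sequence):
        # Assumption: the context starts at zero for every new sequence.
        context = [0.0] * self.n_hidden
        outputs = []
        for x in sequence:
            from_input = matvec(self.w_in, x)
            from_context = matvec(self.w_context, context)
            hidden = [sigmoid(a + b)
                      for a, b in zip(from_input, from_context)]
            outputs.append([sigmoid(o)
                            for o in matvec(self.w_out, hidden)])
            # The hidden state is copied into the context for the next step.
            context = hidden
        return outputs


# Three one-hot "words"; the first and third inputs are identical.
net = SimpleRecurrentNetwork(n_in=3, n_hidden=4, n_out=3)
outputs = net.forward([[1, 0, 0], [0, 1, 0], [1, 0, 0]])
```

Because the context carries the previous hidden state forward, the first and third steps produce different outputs even though they receive the same input. That dependence on what came earlier is what lets the network be trained on order.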

Why it matters: Elman's network showed that a model could capture the order and context of words without being told the grammar, simply by carrying a memory of earlier inputs forward. That idea has had a lasting impact on natural language processing and continues to influence research in neural networks and machine learning.

Why This Mattered

By showing that a network given no explicit information about sentence structure could still pick up temporal patterns and basic grammatical structure from raw sequences, 'Finding Structure in Time' made the simple recurrent network a foundational architecture for sequence modeling. It directly influenced the development of the later recurrent networks used in language processing.