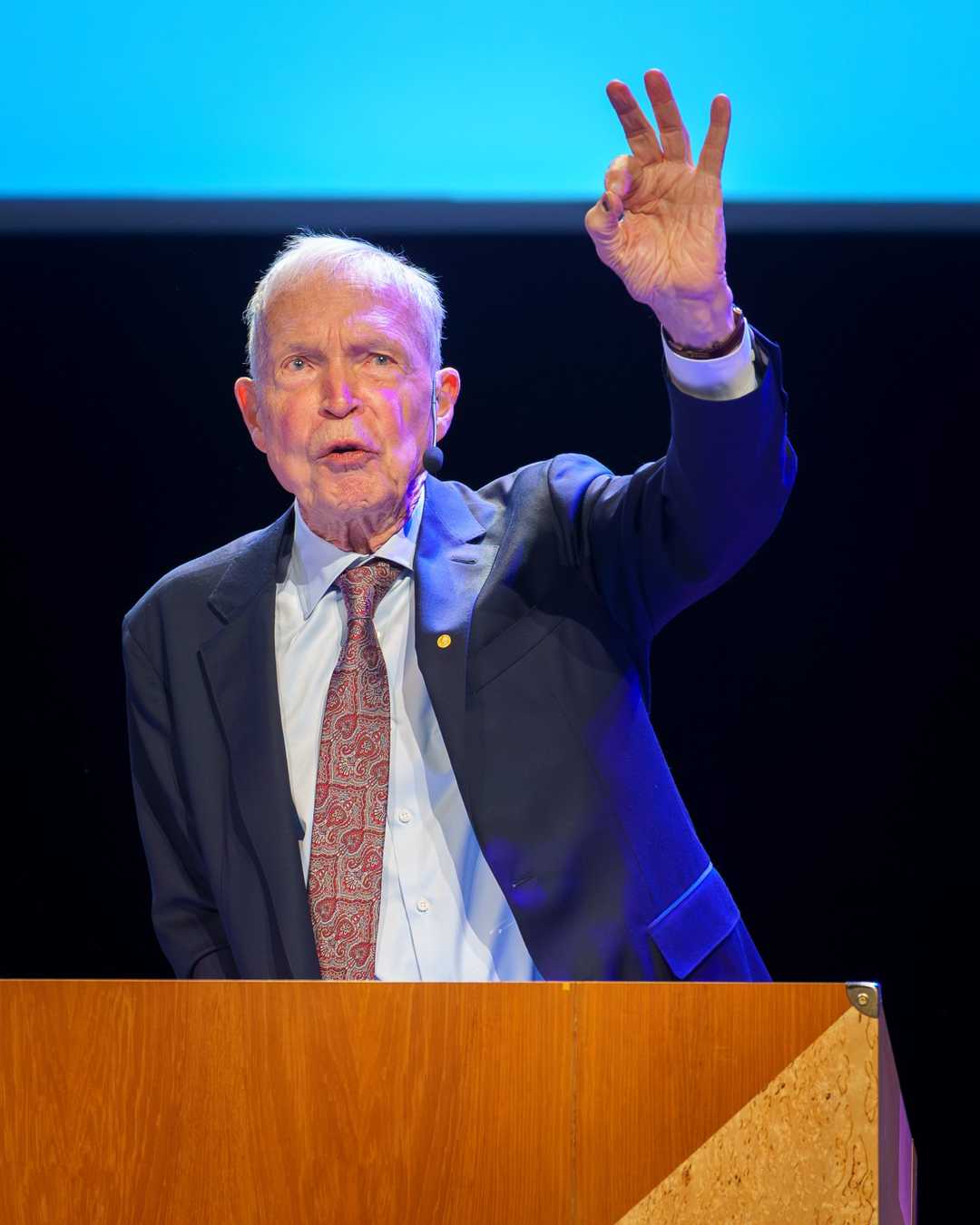

The Neural Network That Mastered Backgammon

Gerald Tesauro's TD-Gammon taught itself to play backgammon at world-champion level, proving neural networks could discover strategies humans never imagined.

TD-Gammon's playing style has actually changed the way the best human players play the game.

— Gerald Tesauro

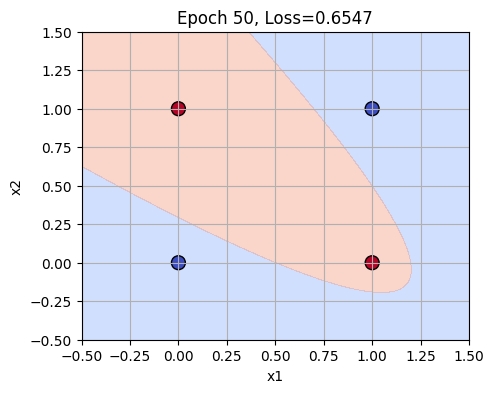

In 1992, Gerald Tesauro at IBM’s Thomas J. Watson Research Center developed TD-Gammon, a groundbreaking computer backgammon program that used artificial neural networks and temporal difference learning. Wikipedia — TD-Gammon explains how TD-Gammon achieved world-class play through self-play and introduced novel strategies that human experts adopted. By 1993, version 2.1 of TD-Gammon had played 1.5 million games and was nearly on par with top human players. Its success in backgammon demonstrated the potential of reinforcement learning and neural networks, influencing future AI developments like AlphaGo.

Why it matters: TD-Gammon’s achievement marked a significant milestone in AI, showing that complex games could be mastered through self-play and reinforcement learning. It not only improved backgammon strategy but also paved the way for advancements in machine learning and game AI.

Further reading:

TD-Gammon’s legacy is evident in the continued use of reinforcement learning in modern AI systems.

Why This Mattered

TD-Gammon was the first program to reach world-class play in a complex game entirely through self-play and temporal difference learning. It discovered novel strategies that changed how human experts played backgammon, and it demonstrated that reinforcement learning could scale — foreshadowing AlphaGo by over two decades.