The Elegant Margin That Dominated Machine Learning

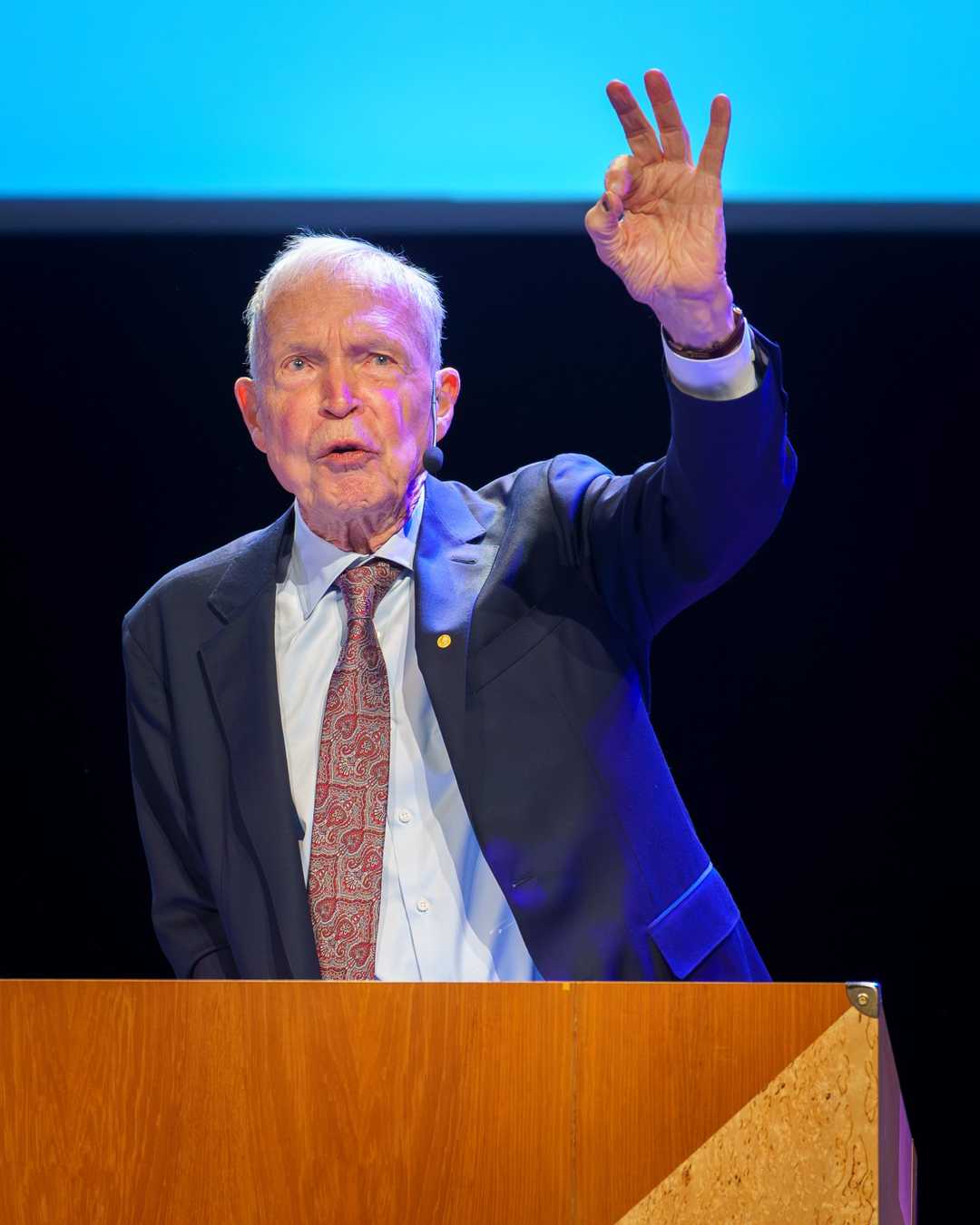

Vladimir Vapnik's Support Vector Machine became the most powerful classification algorithm of its era, quietly ruling AI for over a decade before deep learning took the crown.

In this business, the weights of the evidence are more important than any prior belief.

— Vladimir Vapnik

The Elegant Margin That Dominated Machine Learning (1995)

In 1995, Corinna Cortes and Vladimir Vapnik published the paper that brought support vector machines (SVMs) to prominence. The method reshaped how classification problems were approached and was later extended to regression.

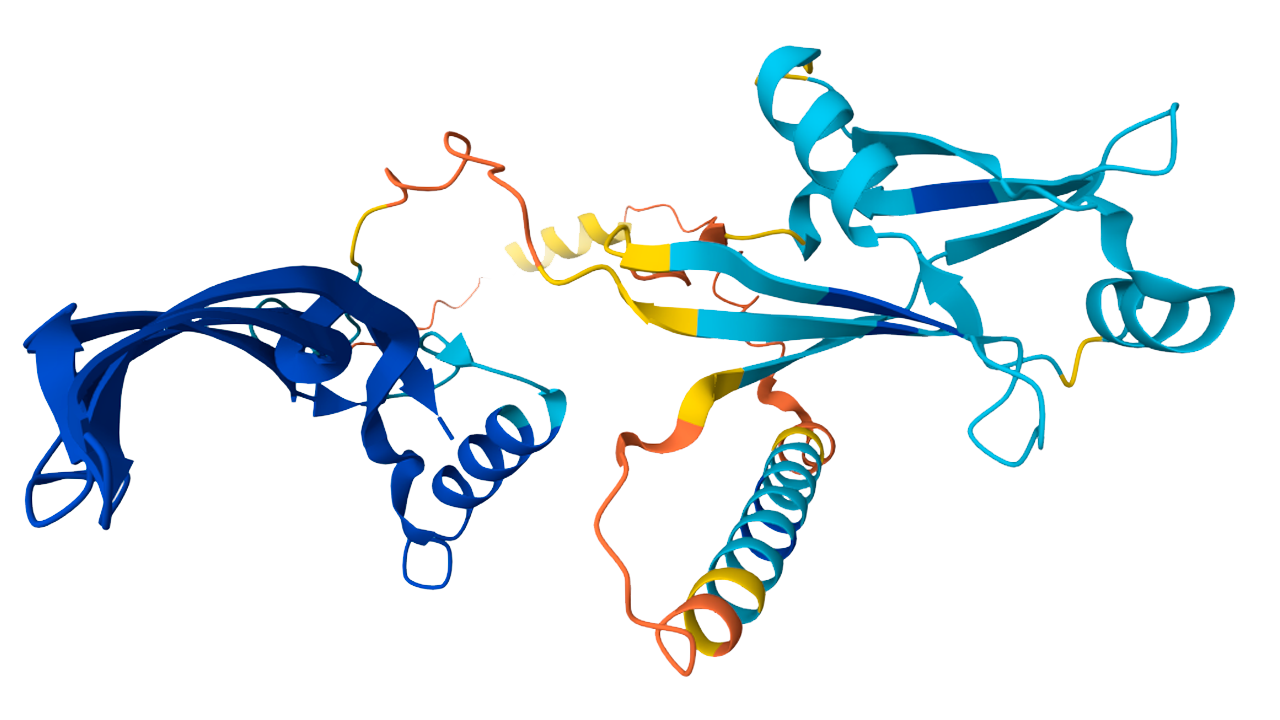

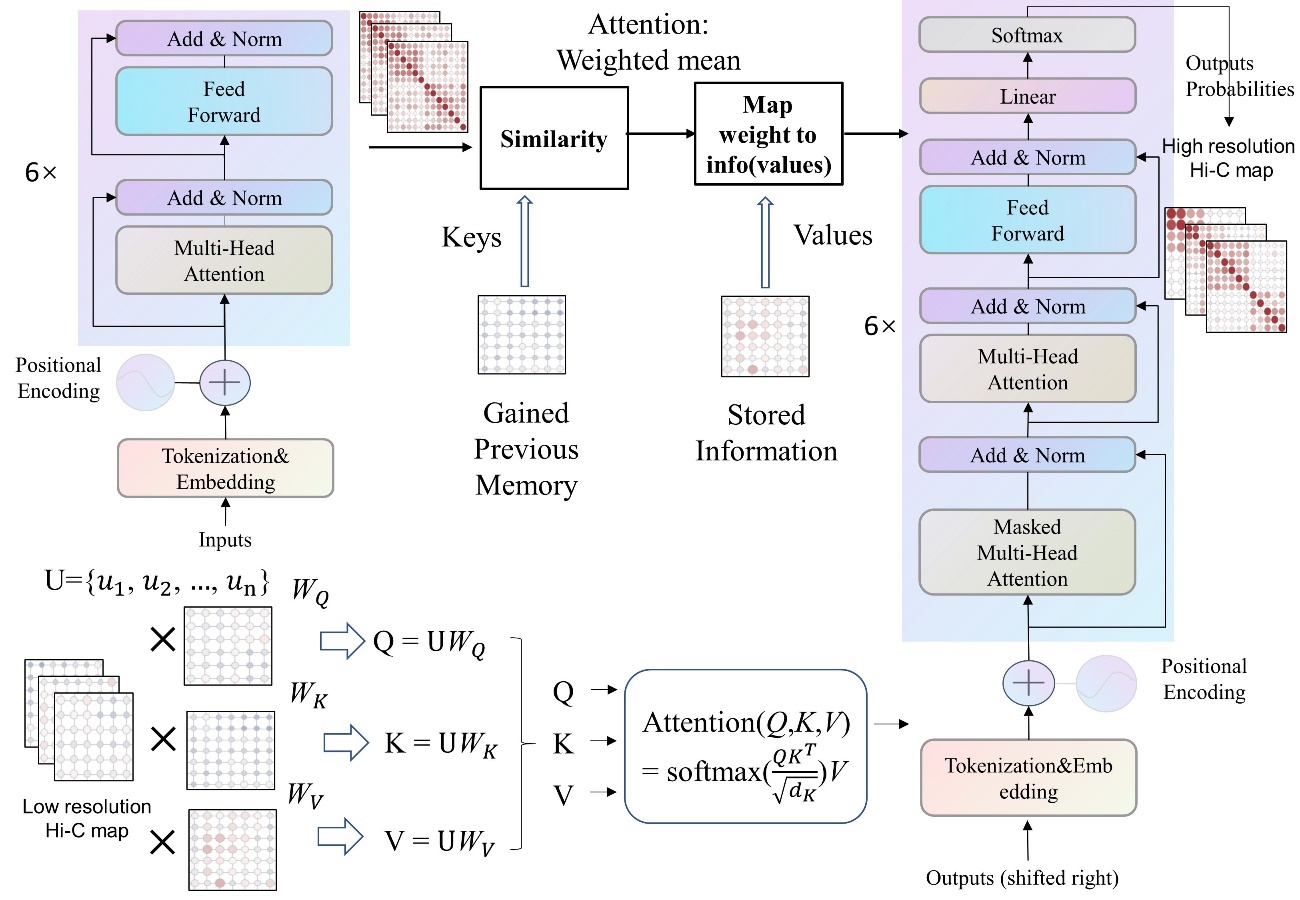

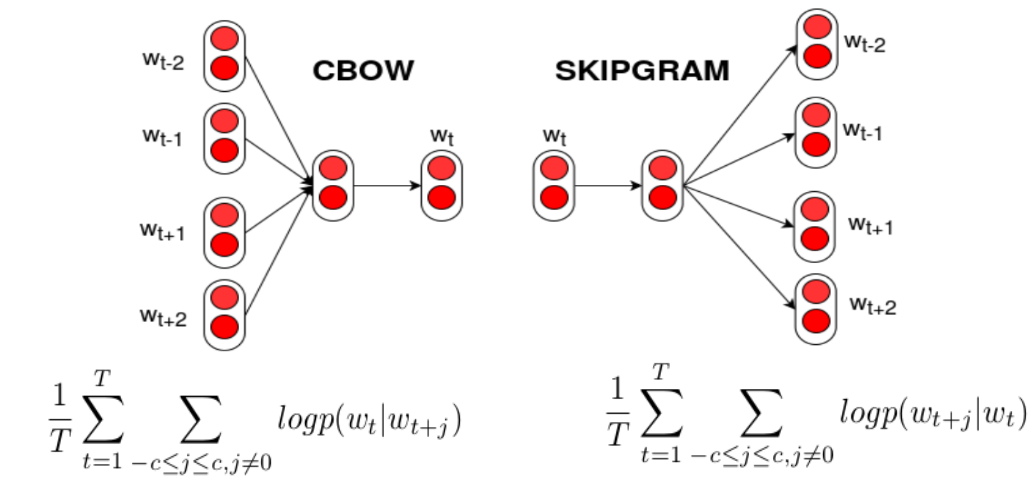

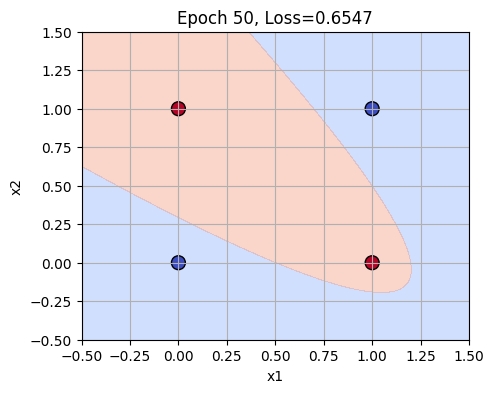

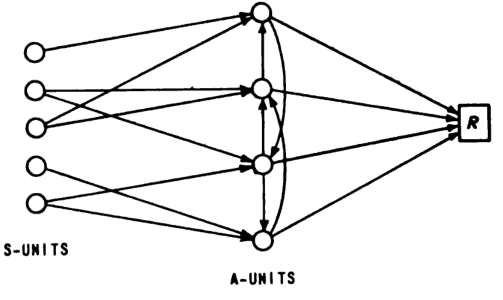

What happened: In 1995, Corinna Cortes and Vladimir Vapnik published their seminal work on support-vector networks, which laid the foundation for support vector machines (SVMs). SVMs are supervised learning models that find the separating hyperplane with the largest margin, meaning the greatest distance to the nearest training points of either class, a concept rooted in Vapnik’s VC theory. Combined with the ‘kernel trick’, which replaces inner products with a kernel function, this let SVMs learn non-linear decision boundaries without explicitly computing a high-dimensional feature map (Cortes & Vapnik, ‘Support-Vector Networks’, Machine Learning, 1995).
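To make the maximum-margin idea concrete, here is a minimal sketch in plain Python. It does not train an SVM; it only measures the geometric margin of two hand-picked hyperplanes on a toy data set, so the data, the function name `geometric_margin`, and both candidate hyperplanes are illustrative assumptions rather than anything taken from the original paper.

```python
import math

def geometric_margin(w, b, points, labels):
    """Smallest signed distance from any labeled point to the hyperplane w.x + b = 0.

    A positive result means every point lies on the correct side.
    """
    norm = math.sqrt(sum(wi * wi for wi in w))
    return min(
        y * (sum(wi * xi for wi, xi in zip(w, x)) + b) / norm
        for x, y in zip(points, labels)
    )

# Toy, hand-made data (an assumption for illustration): two points per class.
points = [(1.0, 1.0), (2.0, 2.0), (-1.0, -1.0), (-2.0, -2.0)]
labels = [1, 1, -1, -1]

# Two hypothetical hyperplanes through the origin; both separate the classes.
margin_diag = geometric_margin((1.0, 1.0), 0.0, points, labels)  # line x1 + x2 = 0
margin_axis = geometric_margin((1.0, 0.0), 0.0, points, labels)  # line x1 = 0

print(f"diagonal hyperplane margin: {margin_diag:.3f}")
print(f"vertical hyperplane margin: {margin_axis:.3f}")
```

Both lines classify every point correctly, but the diagonal one keeps the nearest points farther away. An SVM searches over all separating hyperplanes for the one that makes this quantity as large as possible.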

Why it matters: SVMs dominated machine learning competitions and practical applications from the mid-1990s through the late 2000s, setting a high performance bar that deep learning eventually had to surpass. They demonstrated the practical utility of rigorous statistical theory, making SVMs a cornerstone of machine learning research and application.

SVMs’ elegance and effectiveness on complex data sets made them a standard tool in fields from bioinformatics to finance, a measure of how far the ideas introduced in 1995 travelled.

Why This Mattered

SVMs centered classification on finding the optimal separating hyperplane with maximum margin, combined with the 'kernel trick' to handle nonlinear data. Their dominance in machine learning competitions and practical applications from the mid-1990s through the late 2000s proved that rigorous statistical theory could yield powerful practical tools, and it set the performance bar that deep learning eventually had to surpass.
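The kernel trick can be checked by hand on a small case. The sketch below assumes a homogeneous degree-2 polynomial kernel on 2-D inputs, chosen only because its feature map is short enough to write out; the function names are hypothetical. It shows that evaluating the kernel in the original space gives the same number as an inner product in the explicit three-dimensional feature space.

```python
import math

def poly2_kernel(x, z):
    """Homogeneous degree-2 polynomial kernel: k(x, z) = (x . z)^2."""
    return (x[0] * z[0] + x[1] * z[1]) ** 2

def explicit_features(x):
    """Explicit feature map phi for the same kernel on 2-D inputs:
    phi(x) = (x1^2, sqrt(2) * x1 * x2, x2^2)."""
    return (x[0] ** 2, math.sqrt(2) * x[0] * x[1], x[1] ** 2)

def dot(u, v):
    """Plain inner product of two equal-length tuples."""
    return sum(ui * vi for ui, vi in zip(u, v))

# Two arbitrary example points (assumed values, for illustration only).
x, z = (1.0, 2.0), (3.0, -1.0)

via_kernel = poly2_kernel(x, z)                                 # stays in 2-D
via_features = dot(explicit_features(x), explicit_features(z))  # goes through 3-D

print(via_kernel, via_features)
```

The two values agree up to floating-point rounding. For higher-degree or Gaussian kernels the explicit feature space becomes very large or infinite-dimensional, which is why computing only the kernel value is the practical route.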