The Memory That Saved Neural Networks

Two researchers in Munich published a paper solving the vanishing gradient problem, quietly laying the foundation for every modern AI that understands sequences.

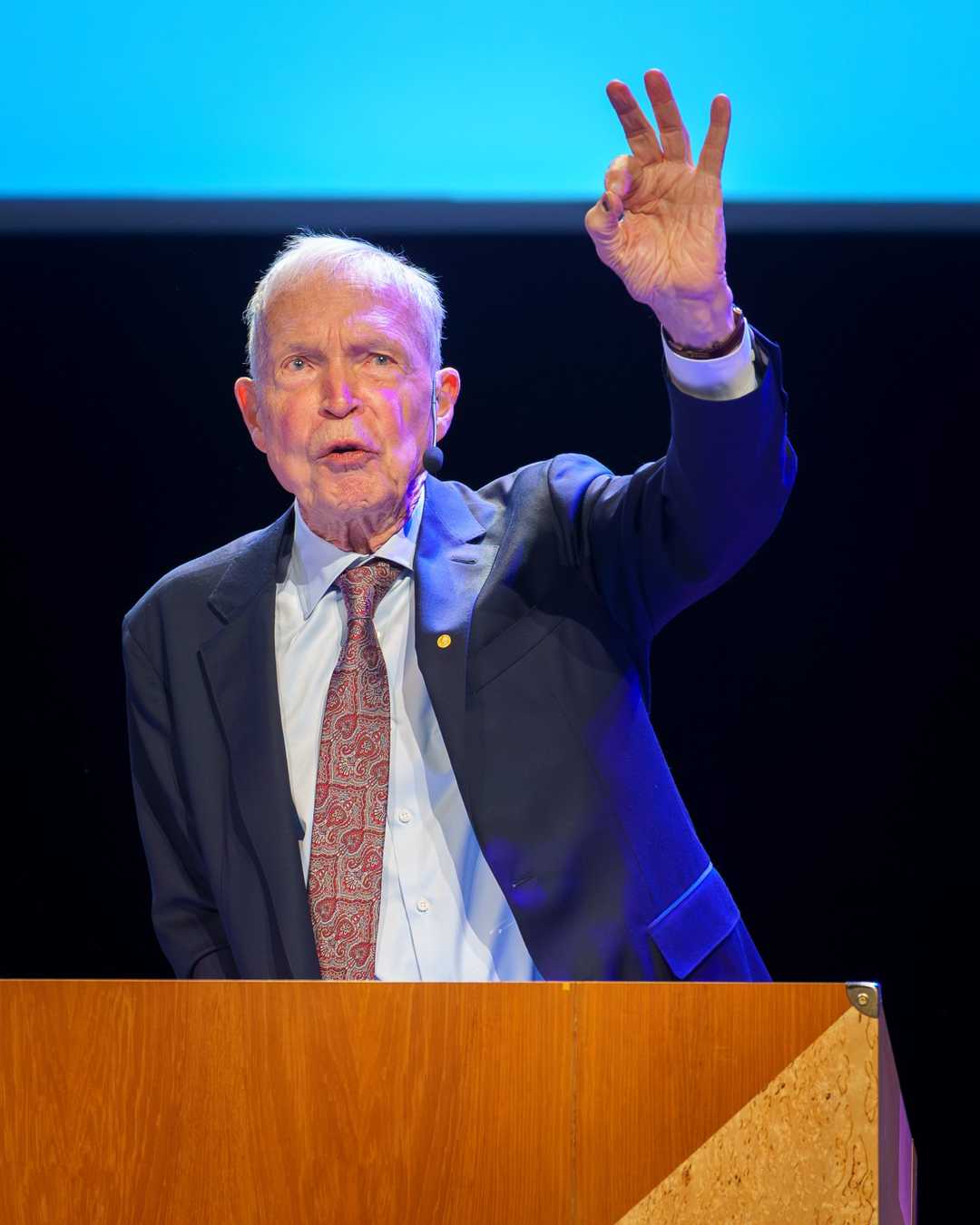

SeppHochreiter / CC BY-SA 4.0

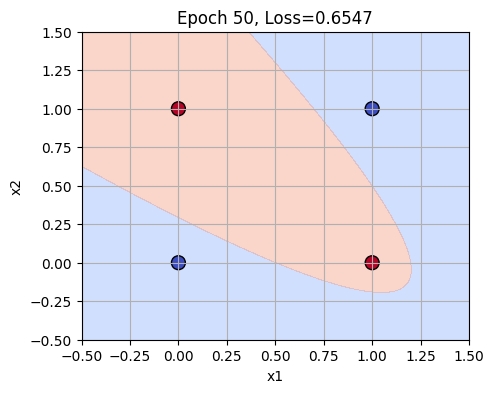

The problem is that backpropagated error signals either shrink or grow exponentially. This is the fundamental deep learning problem.

— Sepp Hochreiter

The Memory That Saved Neural Networks (1997)

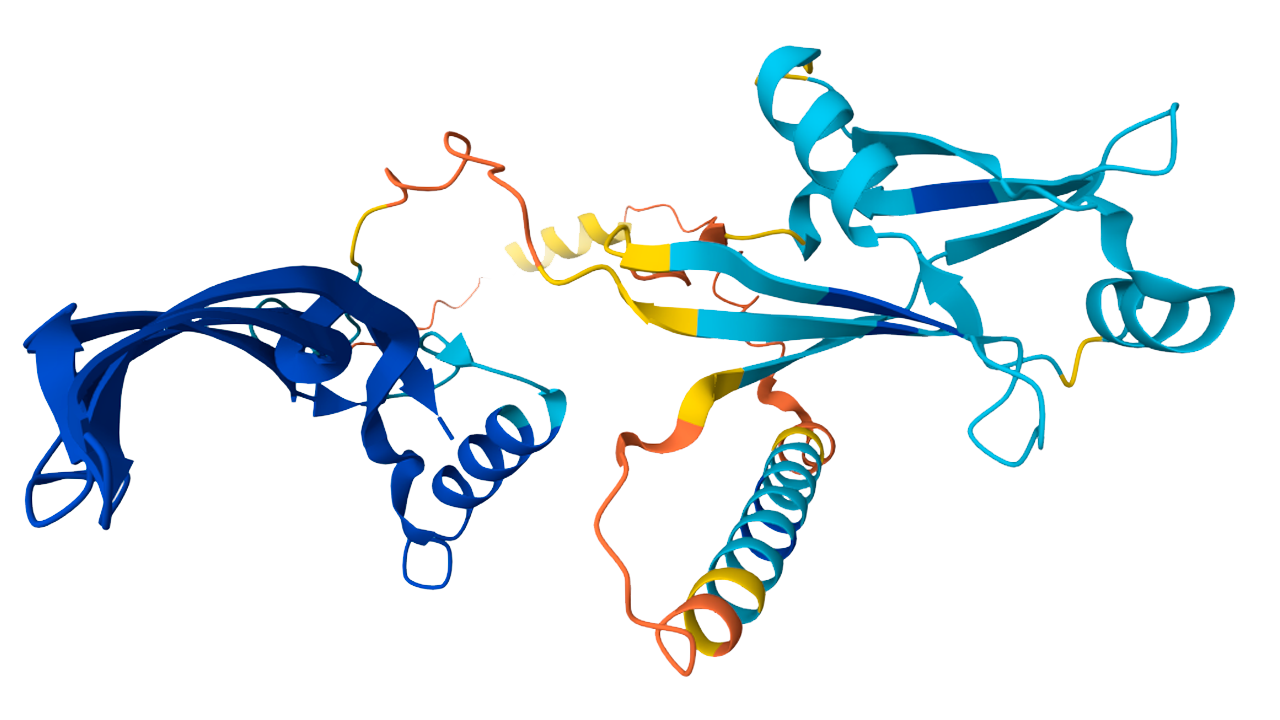

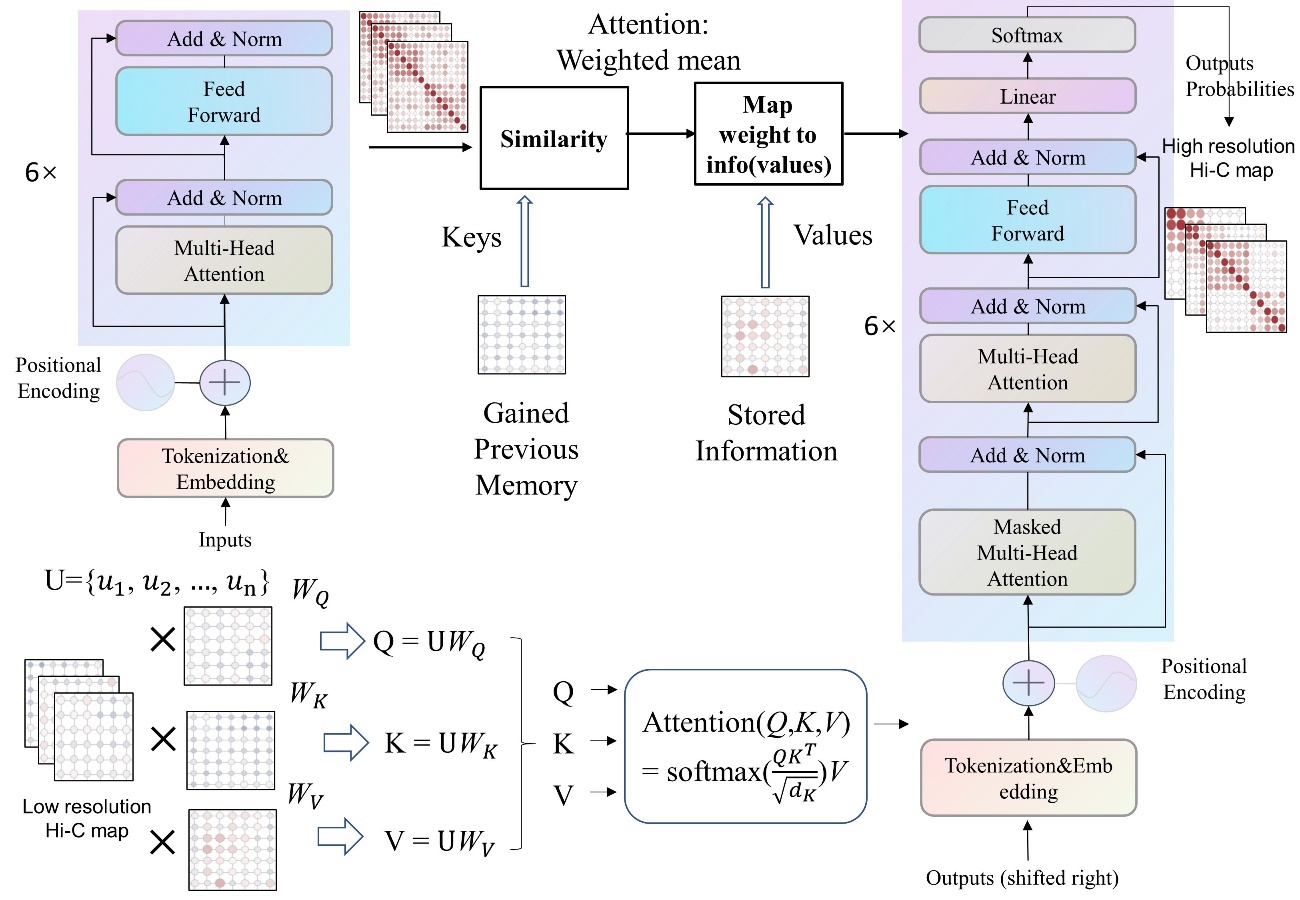

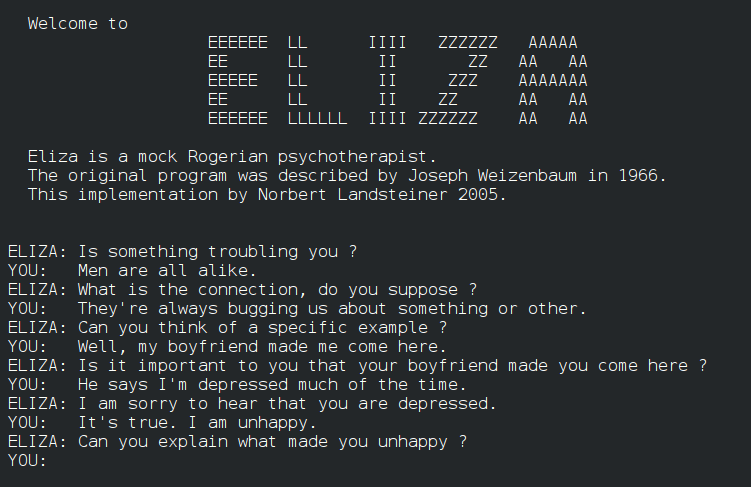

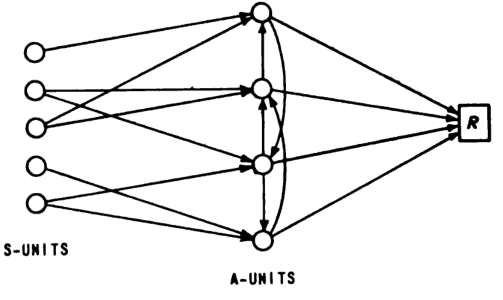

In 1997, Sepp Hochreiter and Jürgen Schmidhuber introduced Long Short-Term Memory (LSTM) networks, a type of recurrent neural network designed to address the vanishing gradient problem. LSTM units are composed of a cell and three gates: input, output, and forget gates, which regulate the flow of information and allow the network to remember information for long periods. This innovation was initially overlooked but eventually became crucial for applications like Google Translate and Siri’s speech recognition.

Why it matters: The introduction of LSTMs in 1997 marked a significant advancement in artificial intelligence, particularly in handling sequential data with long-range dependencies. Despite being ignored for over a decade, LSTMs revolutionized sequence modeling and dominated the field until the advent of transformers in 2017.

Further reading:

Why This Mattered

Long Short-Term Memory networks solved the critical problem of learning long-range dependencies in sequential data. Ignored for over a decade, LSTMs would eventually power Google Translate, Siri's speech recognition, and countless other applications — becoming the dominant architecture for sequence modeling until transformers arrived in 2017.