The Wisdom of a Thousand Trees

In 2001, Leo Breiman published the Random Forest algorithm, showing that an ensemble of weak, randomized decision trees could outperform sophisticated single classifiers.

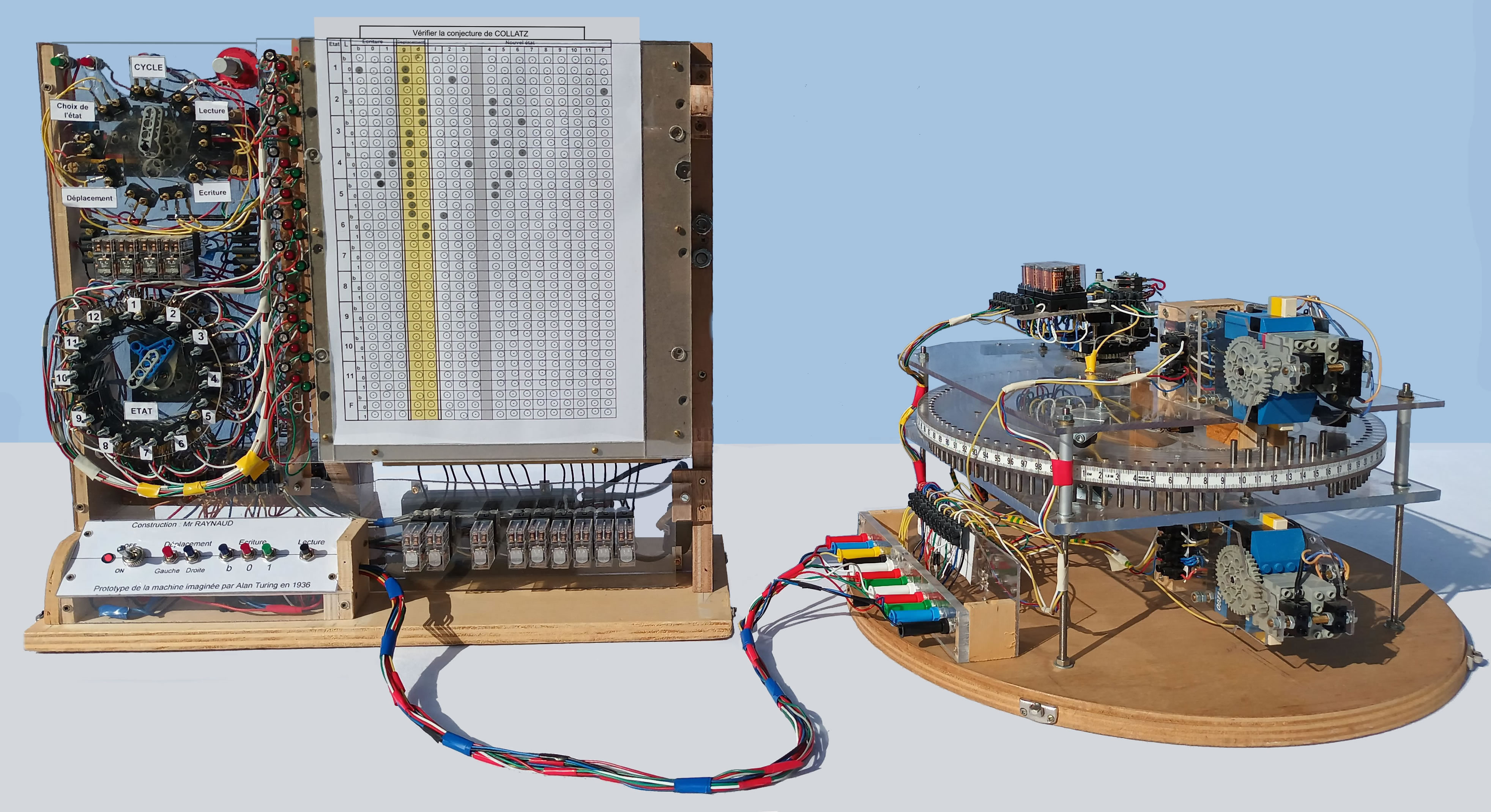

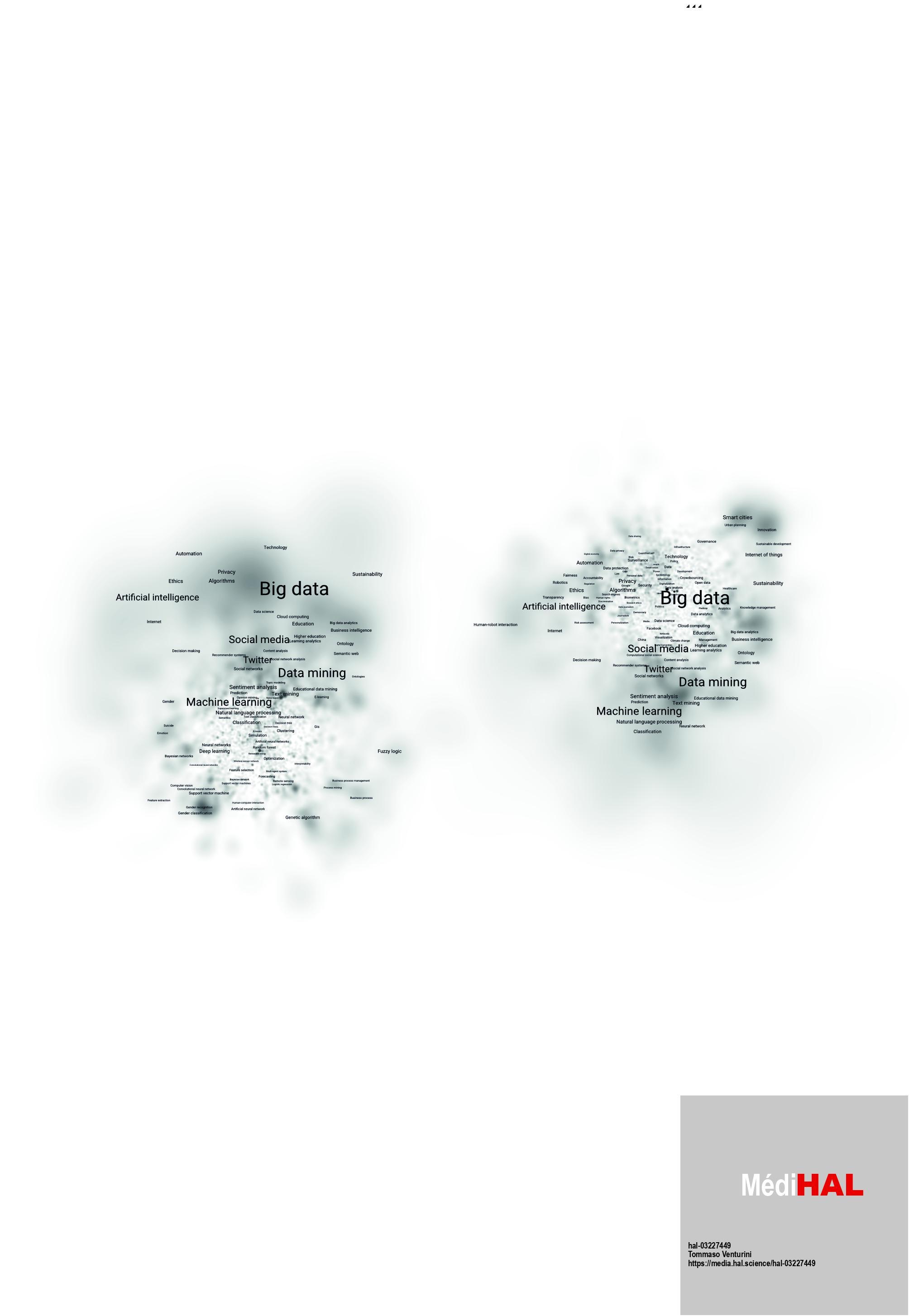

Tommaso Venturini / National Center for Scientific Research / CC BY-SA 4.0

There are two cultures in the use of statistical modeling to reach conclusions from data. One assumes that the data are generated by a given stochastic data model. The other uses algorithmic models and treats the data mechanism as unknown.

— Leo Breiman

The Wisdom of a Thousand Trees (2001)

Random Forests, introduced in 2001 by statistician Leo Breiman, revolutionized machine learning by offering a robust and accurate method for classification and regression tasks.

What happened: In 2001, Leo Breiman published the seminal paper ‘Random Forests’ in the journal Machine Learning, introducing an ensemble learning method that trains many decision trees on randomized versions of the data and combines their predictions to improve accuracy and control overfitting. The method builds on earlier work by Tin Kam Ho, who developed the random subspace method in 1995. Breiman extended the idea together with Adele Cutler, and the two registered ‘Random Forests’ as a trademark in 2006. The algorithm became a cornerstone of machine learning, widely adopted in both scientific research and industry applications.
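To make the ensemble idea concrete, here is a minimal sketch in plain Python. It is an illustration under simplifying assumptions, not Breiman's implementation: each "tree" is only a one-split stump, every stump is trained on a bootstrap sample and a random subset of features, and the ensemble predicts by majority vote. The function names (`fit_stump`, `fit_forest`, `predict_forest`) and the toy data are hypothetical.

```python
import random
from collections import Counter


def fit_stump(rows, labels, features):
    """Find the single (feature, threshold) split with the fewest training errors."""
    best = None
    for f in features:
        for t in sorted({r[f] for r in rows}):
            left = [y for r, y in zip(rows, labels) if r[f] <= t]
            right = [y for r, y in zip(rows, labels) if r[f] > t]
            if not left or not right:
                continue
            l_lab = Counter(left).most_common(1)[0][0]
            r_lab = Counter(right).most_common(1)[0][0]
            errors = sum(y != l_lab for y in left) + sum(y != r_lab for y in right)
            if best is None or errors < best[0]:
                best = (errors, f, t, l_lab, r_lab)
    if best is None:
        # No usable split (e.g. all sampled rows identical): predict the majority label.
        maj = Counter(labels).most_common(1)[0][0]
        return (features[0], float("inf"), maj, maj)
    return best[1:]


def predict_stump(stump, row):
    f, t, l_lab, r_lab = stump
    return l_lab if row[f] <= t else r_lab


def fit_forest(rows, labels, n_trees=25, n_features=1, seed=0):
    """Train n_trees stumps, each on a bootstrap sample and a random feature subset."""
    rng = random.Random(seed)
    n = len(rows)
    forest = []
    for _ in range(n_trees):
        idx = [rng.randrange(n) for _ in range(n)]           # sample rows with replacement
        feats = rng.sample(range(len(rows[0])), n_features)  # random subset of features
        forest.append(fit_stump([rows[i] for i in idx], [labels[i] for i in idx], feats))
    return forest


def predict_forest(forest, row):
    """Combine the individual predictions by majority vote."""
    votes = Counter(predict_stump(s, row) for s in forest)
    return votes.most_common(1)[0][0]


# Toy data (made up for this sketch): class 0 clusters near the origin, class 1 near (5, 5).
rows = [(0.0, 0.5), (1.0, 0.2), (0.5, 1.0), (1.2, 0.8),
        (5.0, 5.5), (6.0, 5.2), (5.5, 6.0), (6.2, 5.8)]
labels = [0, 0, 0, 0, 1, 1, 1, 1]

forest = fit_forest(rows, labels)
low = predict_forest(forest, (0.0, 0.0))
high = predict_forest(forest, (7.0, 7.0))
```

Full random forests grow deep trees and draw a fresh random feature subset at every split. With one split per tree the two kinds of randomization coincide, which keeps the sketch short.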

Why it matters: The introduction of Random Forests marked a significant shift in the philosophy of machine learning, emphasizing predictive accuracy over model interpretability. This approach anticipated the deep learning era, where complex models often outperform simpler, more interpretable ones. The algorithm’s ability to handle large datasets and noisy data made it indispensable for a wide range of applications, from medical diagnostics to financial forecasting.

Why This Mattered

Random Forests became one of the most widely used machine learning algorithms in science and industry, prized for their accuracy, resistance to overfitting, and ability to handle messy real-world data. The method embodied Breiman's broader argument that predictive accuracy mattered more than model interpretability, a philosophical stance that anticipated the deep learning era.