The Paper That Brought Neural Networks Back from the Dead

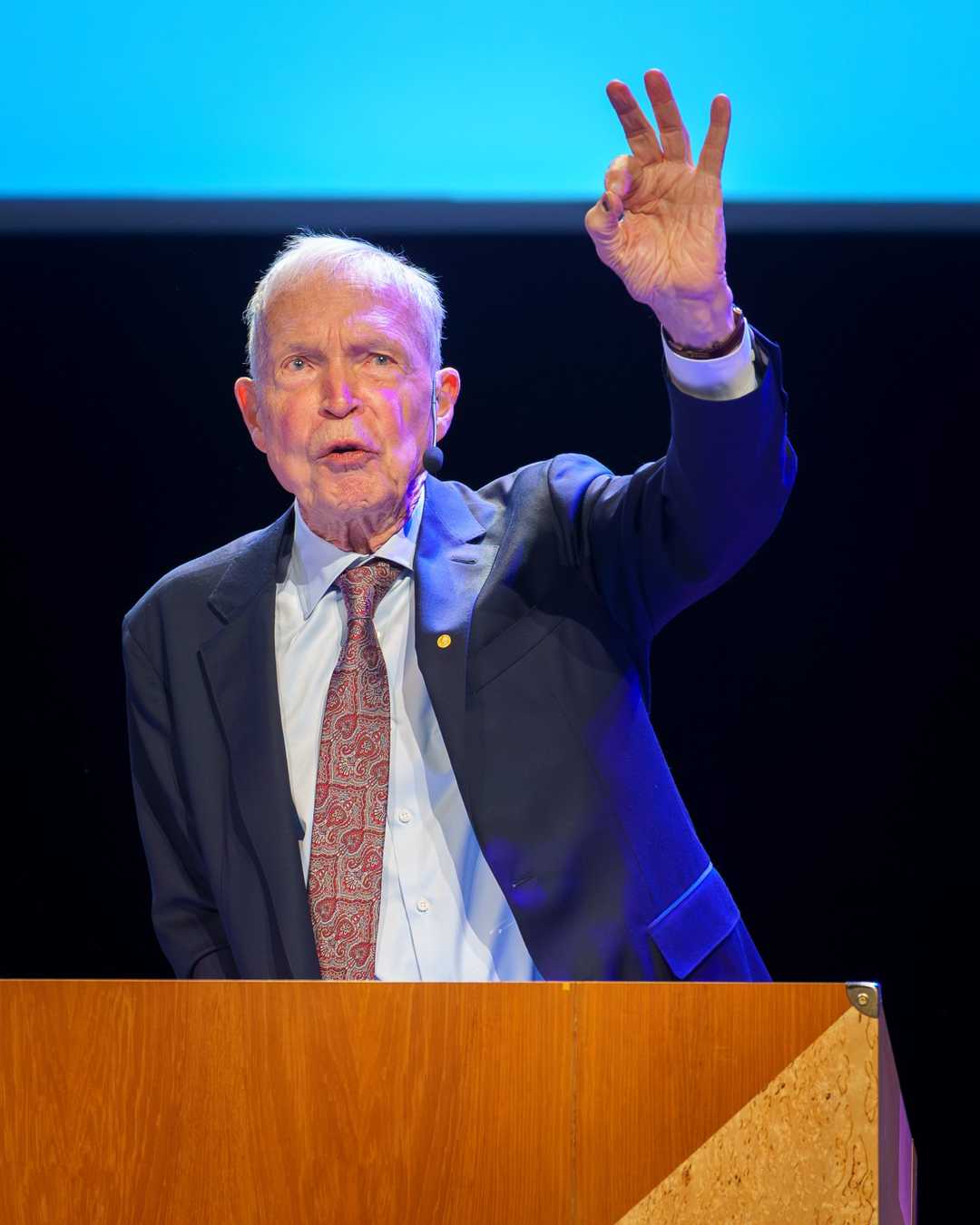

Geoffrey Hinton showed that deep neural networks could be trained layer by layer, reigniting a field that had been written off for decades.

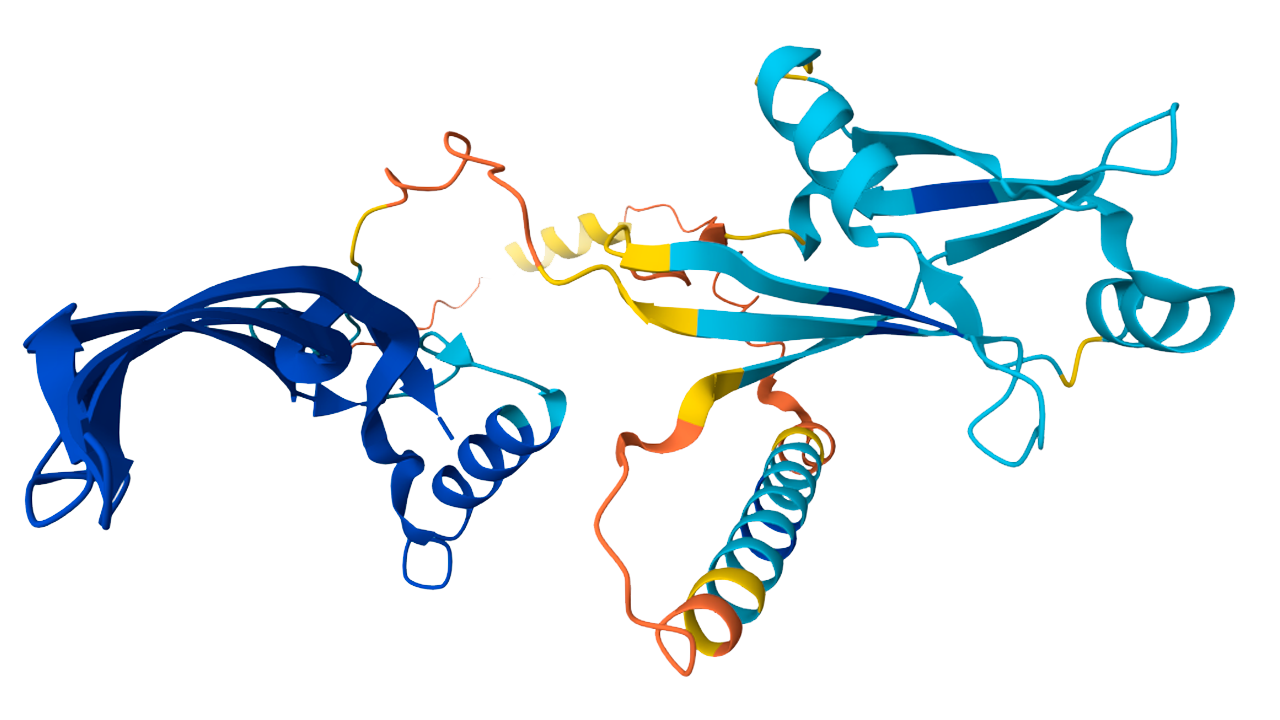

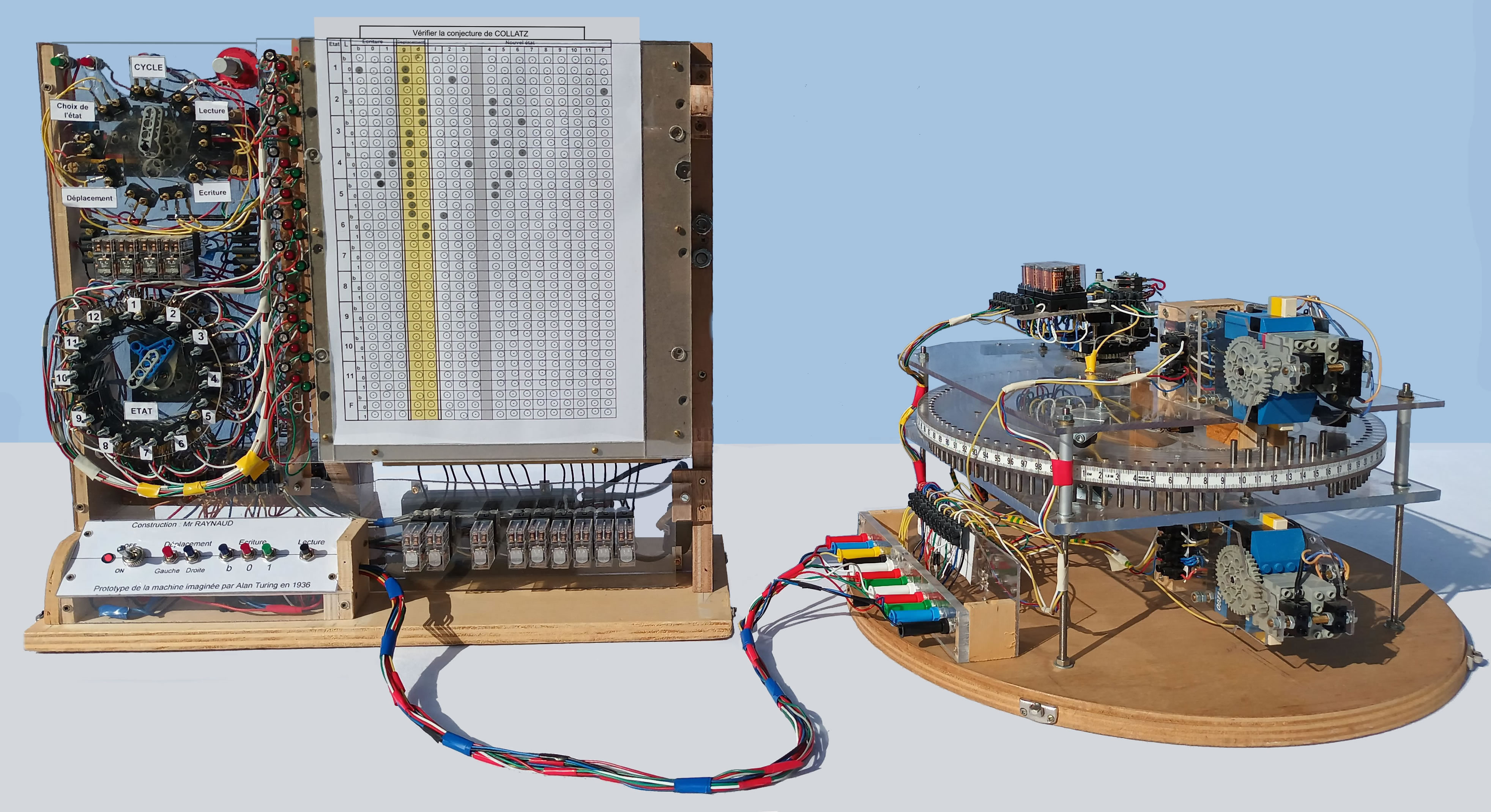

Eviatar Bach / CC BY-SA 3.0

We derive a fast, greedy algorithm that can learn deep, directed belief networks one layer at a time, provided the top two layers form an undirected associative memory.

— Geoffrey Hinton, Simon Osindero, and Yee-Whye Teh


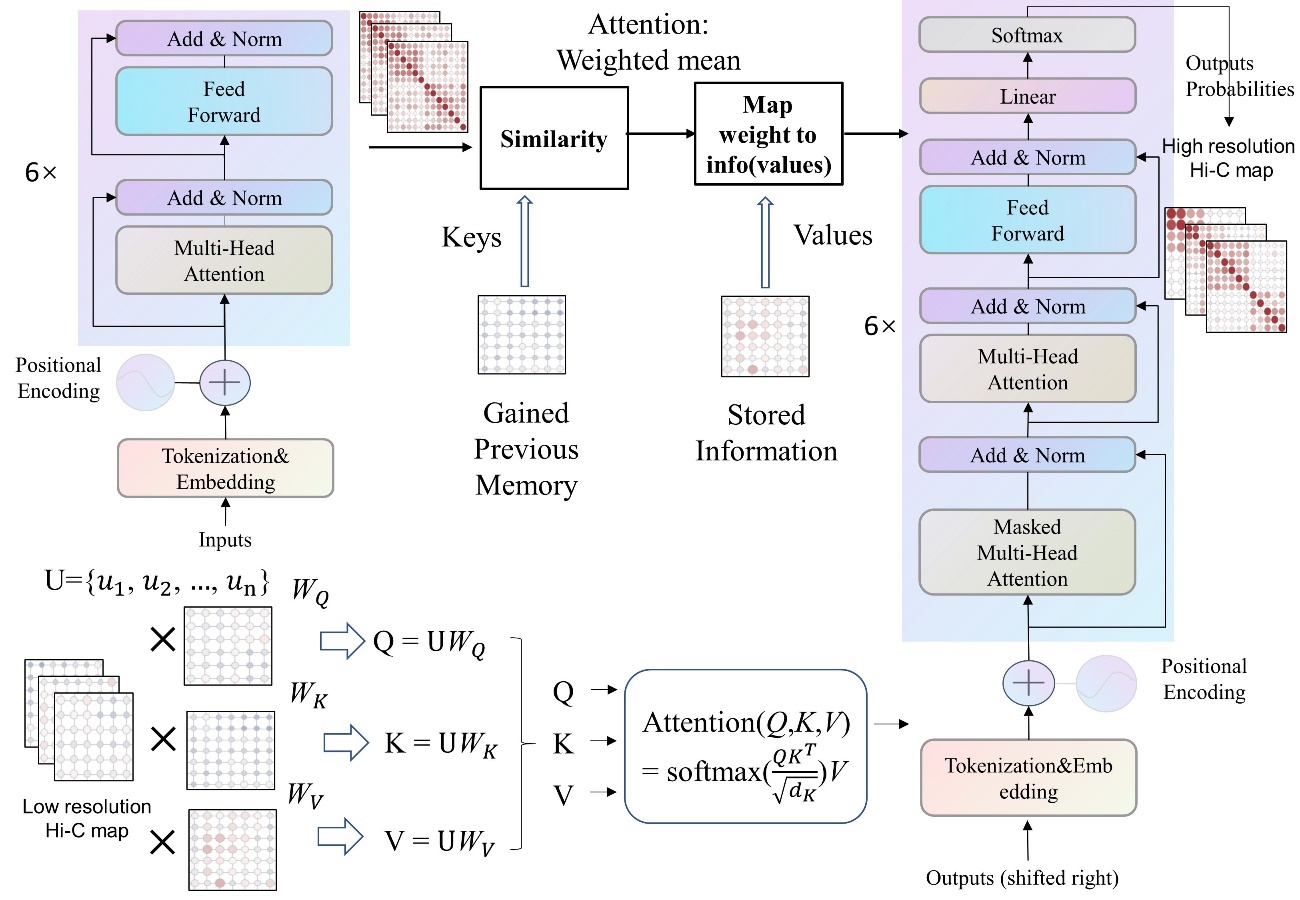

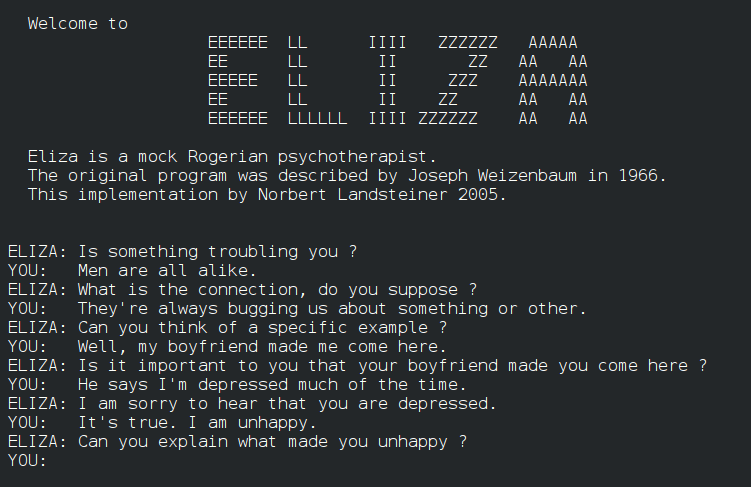

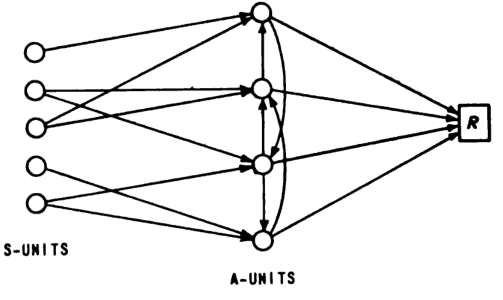

What happened: In 2006, Geoffrey Hinton, Simon Osindero, and Yee-Whye Teh published "A Fast Learning Algorithm for Deep Belief Nets" in Neural Computation. The paper introduced deep belief networks (DBNs) and demonstrated that deep architectures could be trained effectively with greedy layer-wise pretraining, in which each layer is learned in turn on the output of the layer beneath it. This work was pivotal in overturning the prevailing belief that deep neural networks were impractical to train.

Why it matters: The paper marked a turning point in the history of artificial intelligence. It reported that a deep generative model, after fine-tuning, classified handwritten digits better than the best discriminative learning algorithms of the time. DBNs paved the way for more sophisticated deep learning architectures and training algorithms, and for the advances in machine learning that followed.

Why This Mattered

By 2006, most of the machine learning community considered neural networks a dead end. The deep belief networks paper by Hinton, Osindero, and Teh demonstrated that networks with many layers could be trained effectively using greedy layer-wise pretraining, shattering the conventional wisdom that deep architectures were intractable. This result revived an entire research paradigm and set the stage for the deep learning revolution that would transform AI within a decade.
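
To make "greedy layer-wise pretraining" concrete, here is a minimal sketch in plain Python. It assumes each layer is a small binary restricted Boltzmann machine trained with one step of contrastive divergence (CD-1), and it passes hidden probabilities rather than sampled states up to the next layer. The names `RBM`, `cd1_update`, and `pretrain_stack`, the toy data, and all hyperparameters are illustrative choices, not taken from the paper. The sketch also leaves out the label units and the fine-tuning stage, so it should be read as an illustration of the stacking idea rather than a reproduction of the published method.

```python
import math
import random


def sigmoid(x):
    # Numerically stable logistic function.
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


class RBM:
    """Tiny binary restricted Boltzmann machine (illustrative only)."""

    def __init__(self, n_visible, n_hidden, rng):
        self.rng = rng
        self.W = [[rng.gauss(0.0, 0.1) for _ in range(n_hidden)]
                  for _ in range(n_visible)]
        self.b_vis = [0.0] * n_visible
        self.b_hid = [0.0] * n_hidden

    def hidden_probs(self, v):
        return [sigmoid(self.b_hid[j] + sum(v[i] * self.W[i][j] for i in range(len(v))))
                for j in range(len(self.b_hid))]

    def visible_probs(self, h):
        return [sigmoid(self.b_vis[i] + sum(h[j] * self.W[i][j] for j in range(len(h))))
                for i in range(len(self.b_vis))]

    def cd1_update(self, v0, lr):
        # Positive phase: hidden activity driven by the data.
        h0_prob = self.hidden_probs(v0)
        h0 = [1.0 if self.rng.random() < p else 0.0 for p in h0_prob]
        # Negative phase: one reconstruction step (probabilities, a common shortcut).
        v1 = self.visible_probs(h0)
        h1_prob = self.hidden_probs(v1)
        # Move weights toward data statistics and away from reconstruction statistics.
        for i in range(len(v0)):
            for j in range(len(h0_prob)):
                self.W[i][j] += lr * (v0[i] * h0_prob[j] - v1[i] * h1_prob[j])
            self.b_vis[i] += lr * (v0[i] - v1[i])
        for j in range(len(h0_prob)):
            self.b_hid[j] += lr * (h0_prob[j] - h1_prob[j])


def pretrain_stack(data, layer_sizes, epochs=50, lr=0.1, seed=0):
    """Train one RBM per layer, bottom up, each on the output of the layer below."""
    rng = random.Random(seed)
    stack, layer_input = [], data
    for n_hidden in layer_sizes:
        rbm = RBM(len(layer_input[0]), n_hidden, rng)
        for _ in range(epochs):
            for v in layer_input:
                rbm.cd1_update(v, lr)
        stack.append(rbm)
        # Freeze this layer; its hidden activations become the next layer's data.
        layer_input = [rbm.hidden_probs(v) for v in layer_input]
    return stack, layer_input


# Toy data: two repeating 6-bit patterns (made up for this example).
toy_data = [[1, 1, 1, 0, 0, 0], [0, 0, 0, 1, 1, 1]] * 10
stack, top_features = pretrain_stack(toy_data, layer_sizes=[4, 3])
```

The point of the loop in `pretrain_stack` is that no layer ever needs gradients from the layers above it: each one is trained on its own, then held fixed while the next is trained on its activations. That is what made deep stacks tractable without end-to-end backpropagation from a random start.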