The Algorithm That Mastered Atari by Itself

A small London startup showed that a single neural network could learn to play dozens of video games from raw pixels alone, igniting the deep reinforcement learning revolution.

The idea that you could take raw pixels, feed them into a neural network, and out would pop actions that would lead to superhuman performance — that was the dream of AI from the very beginning.

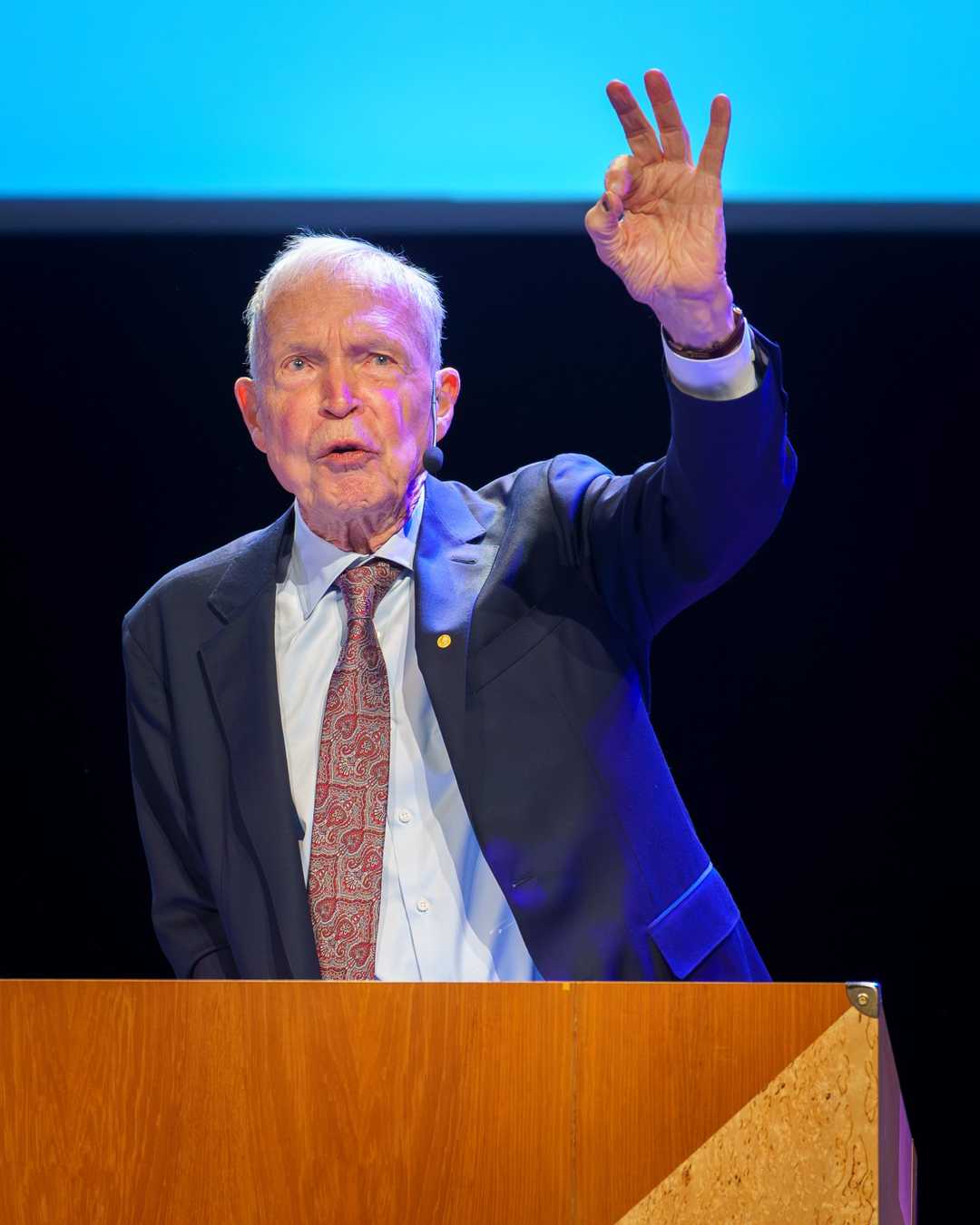

— Demis Hassabis

The Algorithm That Mastered Atari by Itself (2013)

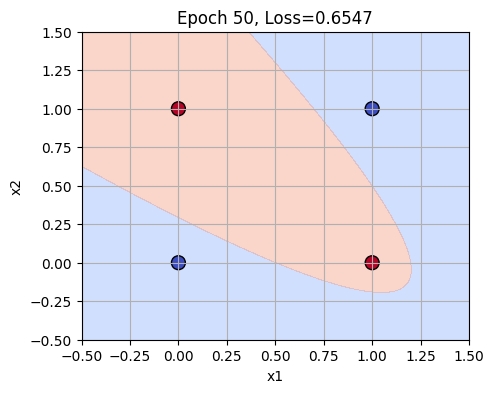

In 2013, DeepMind Technologies introduced the Deep Q-Network (DQN), a groundbreaking algorithm that mastered Atari games using raw pixel inputs without any game-specific programming.

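What happened: In December 2013, DeepMind researchers including Volodymyr Mnih, Koray Kavukcuoglu, and David Silver published the paper "Playing Atari with Deep Reinforcement Learning," which introduced the Deep Q-Network (DQN). It combined deep learning with reinforcement learning: a convolutional neural network read raw screen pixels and estimated the value of each possible action, trained with a variant of Q-learning. On the seven Atari 2600 games tested, the same architecture outperformed all previous approaches on six and surpassed an expert human player on three. This was a significant milestone in artificial intelligence, demonstrating that a single architecture could learn diverse tasks from raw sensory input.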
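
For readers who want to see the reinforcement learning half of that combination, here is a minimal sketch of the Q-learning update that DQN builds on. It is not DeepMind's implementation: a small lookup table stands in for the neural network, and the five-cell corridor environment, the function names, and the hyperparameter values are all assumptions made up for illustration.

```python
import random


def q_learning_update(q, state, action, reward, next_state, done,
                      alpha=0.1, gamma=0.99):
    """Apply one Q-learning update to the table `q` in place; return the target."""
    # Bootstrapped target: reward plus the discounted best value of the next state.
    best_next = 0.0 if done else max(q[next_state])
    target = reward + gamma * best_next
    # Move the current estimate a fraction `alpha` toward the target.
    q[state][action] += alpha * (target - q[state][action])
    return target


def train_chain(n_states=5, episodes=500, epsilon=0.1, seed=0):
    """Learn a toy corridor (hypothetical environment, not an Atari game).

    Action 0 moves left, action 1 moves right; reaching the last cell
    ends the episode with reward 1.
    """
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(n_states)]
    for _ in range(episodes):
        state = 0
        for _ in range(100):  # cap the episode length
            # Epsilon-greedy choice: explore sometimes, break ties at random.
            if rng.random() < epsilon or q[state][0] == q[state][1]:
                action = rng.randrange(2)
            else:
                action = 0 if q[state][0] > q[state][1] else 1
            next_state = max(0, state - 1) if action == 0 else state + 1
            done = next_state == n_states - 1
            reward = 1.0 if done else 0.0
            q_learning_update(q, state, action, reward, next_state, done)
            state = next_state
            if done:
                break
    return q
```

Calling `train_chain()` returns a table in which moving right is valued above moving left in every non-terminal cell. DQN keeps the same target, `reward + gamma * max(Q(next_state))`, but replaces the table with a convolutional network that takes screen pixels as input, which is what lets it cope with the enormous number of possible game screens.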

Why it matters: The DQN algorithm paved the way for more advanced AI systems like AlphaGo, which defeated world champion Lee Sedol at the game of Go in 2016. The work showed that deep reinforcement learning could cope with complex, high-dimensional inputs, setting the stage for modern AI agents that learn from experience and adapt to new challenges. Google acquired DeepMind in January 2014, shortly after this breakthrough, for a reported sum of more than $500 million.


Why This Mattered

DeepMind's Deep Q-Network (DQN) combined deep learning with reinforcement learning for the first time at scale, learning to play Atari 2600 games, in some cases better than an expert human, with no game-specific engineering. The work showed that a single architecture could learn diverse tasks from raw sensory input, paving the way to AlphaGo and modern AI agents. Google acquired DeepMind for a reported sum of more than $500 million just weeks later.