The Machine That Taught Itself to Play

A London startup's algorithm learned to play dozens of Atari games from raw pixels and the game score alone, showing that a machine could pick up complex tasks without being taught the rules.

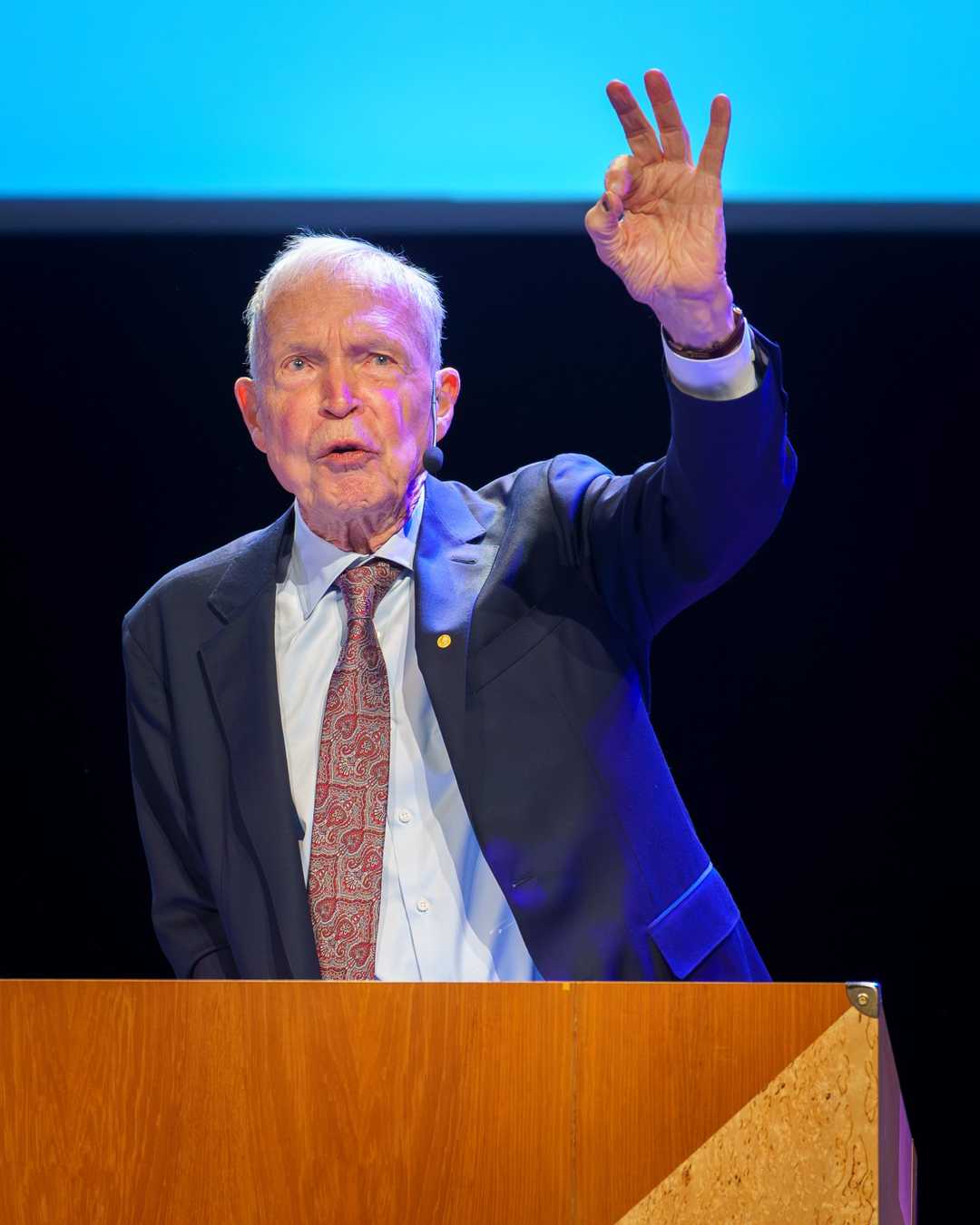

The only successful approach to AI so far has been to try to approximate what the brain does, and we think deep reinforcement learning represents the first significant step in that direction.

— Demis Hassabis

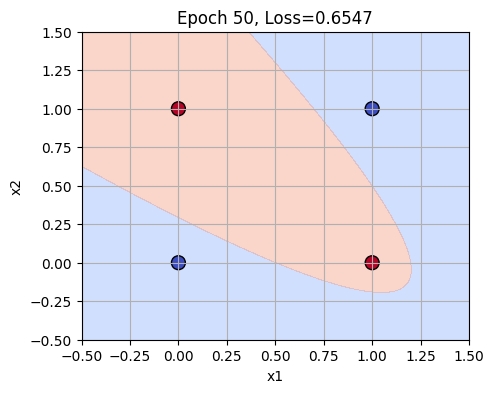

In 2015, DeepMind's deep Q-network (DQN) showed that a single algorithm could learn to play dozens of Atari games on its own, a pivotal moment in the history of artificial intelligence.

What happened: In February 2015, a team of researchers at DeepMind, including Volodymyr Mnih, Koray Kavukcuoglu, David Silver, and Demis Hassabis, published their work on the deep Q-network (DQN) in the journal Nature. The algorithm paired a deep neural network with reinforcement learning and was tested on 49 Atari 2600 games, reaching a level comparable to a professional human games tester on more than half of them. Notably, DQN learned directly from high-dimensional sensory input (the raw pixels on the screen, plus the game score as its reward signal) and used the same architecture and settings for every game, with no game-specific engineering.
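For readers curious about the mechanics, the "Q" in DQN comes from Q-learning, which estimates how much future reward each action is worth and nudges that estimate toward what the agent actually observes. The sketch below is illustrative only and is not DeepMind's code: the function names and numbers are hypothetical, and the estimates here are plain numbers, whereas DQN produces them with a neural network reading the screen and adds further stabilizing techniques (experience replay and a separate target network) that are omitted.

```python
import numpy as np

def q_learning_target(reward, next_q_values, done, gamma=0.99):
    """Target value: the reward alone if the episode ended,
    otherwise reward + gamma * (best estimated value in the next state)."""
    if done:
        return reward
    return reward + gamma * float(np.max(next_q_values))

def td_update(q_value, target, lr=0.1):
    """Move the current estimate a fraction `lr` of the way toward the target."""
    return q_value + lr * (target - q_value)

# Hypothetical numbers: the agent scored 1 point, and its estimates for the
# three actions available in the next frame are 0.5, 2.0 and 1.0.
target = q_learning_target(reward=1.0,
                           next_q_values=np.array([0.5, 2.0, 1.0]),
                           done=False,
                           gamma=0.9)
new_q = td_update(q_value=1.0, target=target, lr=0.5)
print(target, new_q)
```

In a network-based version, the gap between `target` and the current estimate would serve as the training error rather than being applied to a single stored number.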

Why it matters: DQN's ability to learn from unstructured input and perform complex tasks autonomously was a significant breakthrough. It reignited interest in reinforcement learning and laid the groundwork for subsequent achievements like AlphaGo, which defeated Go world champion Lee Sedol about a year later, in March 2016. The milestone suggested that deep reinforcement learning could eventually be applied beyond games and opened up new avenues for research and application in robotics, healthcare, finance, and more.

Why This Mattered

DeepMind's deep Q-network (DQN), published in Nature in February 2015, paired deep learning with reinforcement learning and reached human-level performance on many of the 49 Atari 2600 games it was tested on. It demonstrated that a single architecture, with no game-specific engineering, could learn directly from high-dimensional sensory input. That result reignited interest in reinforcement learning and laid the groundwork for AlphaGo's victory over Lee Sedol about a year later.