The 175 Billion Parameter Surprise

OpenAI's GPT-3 demonstrated that scaling up language models could produce emergent abilities no one explicitly programmed, igniting the foundation model era.

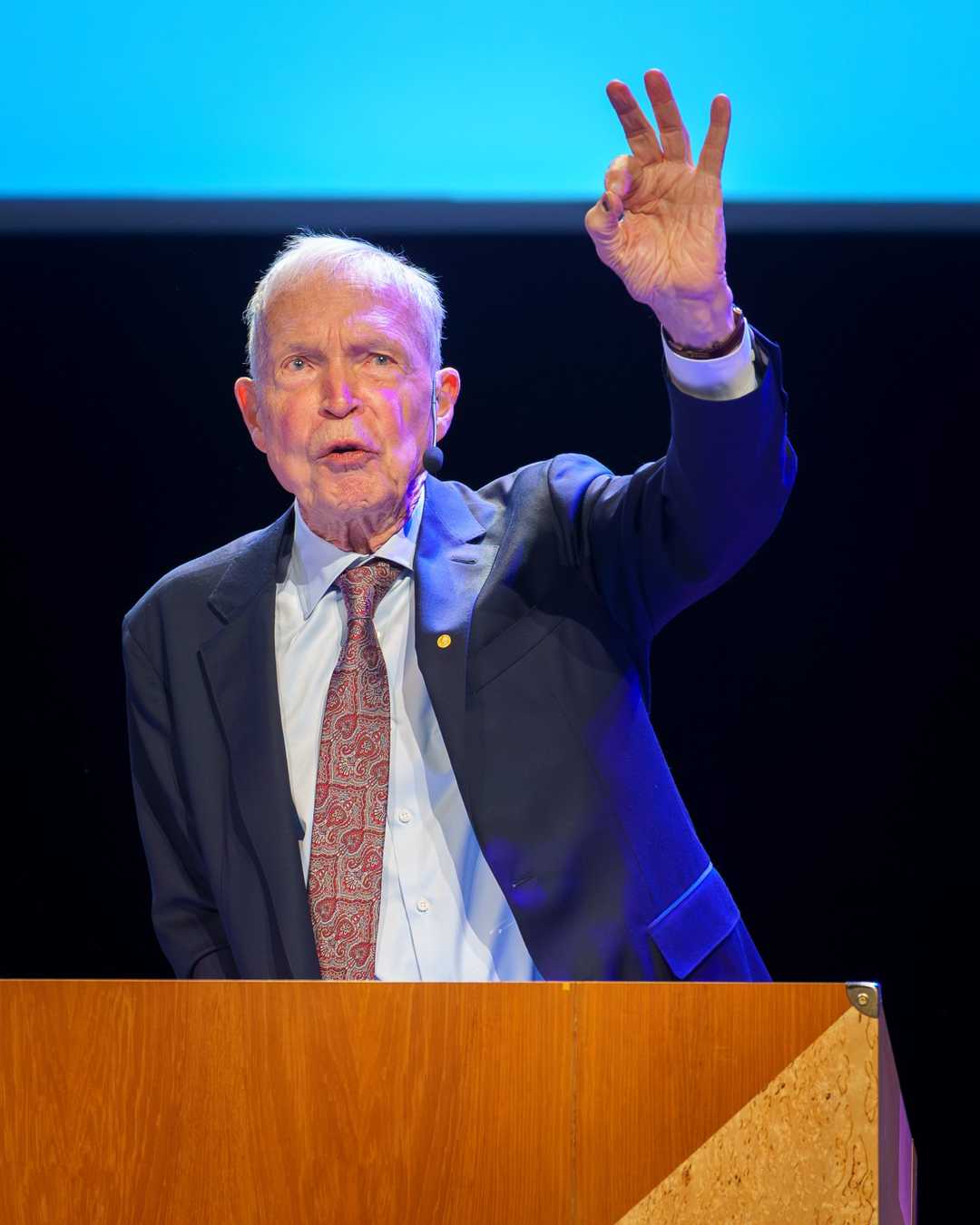

איתן ברוך / CC BY-SA 4.0

The thing that's really surprising is not any single capability, but the fact that all these capabilities emerge from one model trained with one objective.

— Ilya Sutskever

The 175 Billion Parameter Surprise (2020)

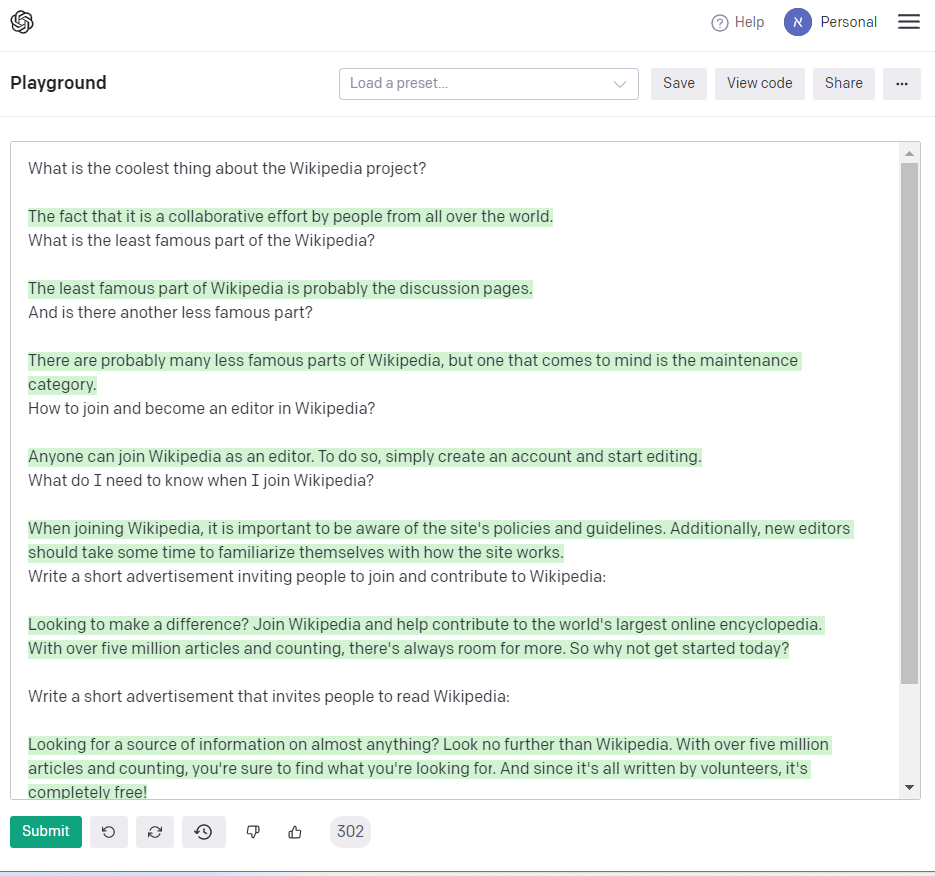

The release of GPT-3 in 2020 was a milestone for artificial intelligence: it showed how far scale alone could carry a single model toward generality.

What happened: On September 22, 2020, Microsoft announced that it had exclusively licensed GPT-3, the language model developed at OpenAI by a team that included Tom Brown, Benjamin Mann, and Ilya Sutskever, with Sam Altman as the company's CEO. With 175 billion parameters, the model could write essays, translate languages, answer questions, and generate code, all without task-specific training.

Why it matters: GPT-3 changed the AI industry's trajectory by showing that scale itself could be a path to generality. It set off a wave of foundation-model startups and products and made large-scale models central to AI research and applications.

Further reading:

GPT-3 (Wikipedia)

Language Models are Few-Shot Learners (original paper)

Why This Mattered

GPT-3 showed that a single model trained on internet text could write essays, translate languages, answer questions, and even generate code — all without task-specific training. It transformed the AI industry's trajectory, proving that scale itself could be a path to generality and launching a wave of foundation-model startups and products.